When an admissions counselor reverses course on the acceptability of applicants using generative AI tools, it’s more than a petty policy flip — it’s a cultural signal. The move marks a transition from a defensive, detection-first posture to one that treats large language models as instruments of literacy and creativity. For college admissions — a domain that has long prized authenticity and penalized gaming — the shift demands new norms, new evaluation methods, and new businesses to mediate the relationship between human intent and machine assistance.

Why a counselor’s change of heart matters

Admissions officers are gatekeepers. Their practices shape not only which students enter a campus but also how young people prepare and present themselves. When a counselor publicly reframes AI use — from cheating to legitimate help — it legitimizes a broader set of behaviors among applicants, counselors, and even families. That ripple effect reaches edtech companies, detection vendors, high school curricula, and university policy teams.

This is not a binary debate. Few stakeholders advocate unregulated, unlimited AI use in high-stakes application materials. Instead, the emerging conversation centers on nuance: distinguishing between surface-level editing, idea generation, and substantive misrepresentation of a student’s abilities. The counselor’s pivot reflects an operational reality: generative AI is ubiquitous, often beneficial, and increasingly impossible to treat as a rare exception.

The practical calculus

Why change a policy? Several practical factors are at play. Detection tools for AI-generated content are imperfect and prone to false positives, so penalizing candidates on shaky evidence risks unfair outcomes and reputational harm. Meanwhile, AI can help non-native speakers sharpen their language, give first-time writers a structure to work from, and catalyze ideas. These are all legitimate forms of assistance that many admissions systems already accept when they come from tutors, parents, or writing centers.
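To see why even a seemingly accurate detector can produce shaky evidence, it helps to run the base-rate arithmetic. The Python sketch below uses entirely hypothetical numbers: the essay counts, the 5 percent false-positive rate, and the 90 percent detection rate are illustrative assumptions, not measurements of any real tool, and the function name `flag_breakdown` is invented for this example.

```python
def flag_breakdown(n_human, n_ai, false_positive_rate, true_positive_rate):
    """Return (false_flags, true_flags, share_of_flags_that_are_false)."""
    false_flags = n_human * false_positive_rate  # human-written essays wrongly flagged
    true_flags = n_ai * true_positive_rate       # AI-written essays correctly flagged
    total_flags = false_flags + true_flags
    share_false = false_flags / total_flags if total_flags else 0.0
    return false_flags, true_flags, share_false


# Hypothetical pool: 8,000 human-written and 2,000 AI-written essays.
false_flags, true_flags, share_false = flag_breakdown(
    n_human=8_000,
    n_ai=2_000,
    false_positive_rate=0.05,
    true_positive_rate=0.90,
)
print(f"Wrongly flagged: {false_flags:.0f}")
print(f"Correctly flagged: {true_flags:.0f}")
print(f"Share of flags that are wrong: {share_false:.1%}")
```

Under these assumed rates, about 400 of roughly 2,200 flagged essays, or around 18 percent, would belong to students who wrote their own work. The share grows as genuine AI use becomes rarer in the pool, which is why a flag alone is weak grounds for a penalty.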

Accepting responsibly assisted work reduces adversarial policing and forces institutions to articulate what they actually value: independent thinking, demonstrated process, or polished prose. How they answer will define the next wave of admissions practice.

Strategic context within the AI industry

The counselor’s change is both a symptom and a driver of larger market shifts. Generative AI has moved from novelty to infrastructure. LLMs now power writing assistants, tutoring platforms, and tools that help students brainstorm project ideas. The AI industry, for its part, is adapting: vendors are introducing features focused on provenance (who authored what), fine-grained controls for reuse, and API hooks for institutional oversight.

Two industry dynamics matter here.

- Feature convergence: Edtech platforms increasingly bake LLM capabilities into their offerings. Institutions that tolerate AI use will partner with providers to ensure safe, equitable access and to capture process metadata that evidences student authorship.

- Detection arms race: Developers of AI-generated content detectors respond to model improvements with updated heuristics, and model providers iterate to make outputs more human-like. The result is a continuous cycle of adaptation — costly, imperfect, and ultimately untenable as a long-term enforcement strategy.

Risks and equity considerations

Normalizing AI assistance without guardrails risks amplifying existing inequalities. Students with access to premium AI tools, coaching on how to use them well, or paid admissions consultants will gain an advantage over those who don’t. Left unchecked, generative AI could entrench a new form of “resource-based” meritocracy.

Other risks include:

- Hallucinations: AI can invent facts, misrepresent experiences, or produce convincing-but-false claims that mislead reviewers.

- Bias amplification: Models trained on biased data may reproduce stereotypes or favor certain cultural frames of reference.

- Credential dilution: If personal statements become heavily AI-assisted, admissions committees may lose a reliable signal of individuality.

Addressing these risks requires more than bans. It requires deliberate policy design focused on access, transparency, and robust assessment techniques that emphasize process over polished output.

Opportunities for redesigning assessment

The arrival of ChatGPT and its peers invites creative rethinking of what admissions materials should look like. A future-proof admissions process could emphasize:

- Process evidence: Draft histories, revision logs, and learning journals that show how a student’s ideas evolved.

- Project-based portfolios: Artifacts such as coding repositories, video presentations, or research notes that are harder to fabricate with a single round of text generation.

- Timed, in-person evaluations: Short writing tasks under controlled conditions that complement longer-form submissions.

- AI literacy demonstration: Requiring applicants to briefly describe how they used AI in their work — what prompts they used, what they accepted or rejected — turning tool use into an evaluative lens rather than a stealthy advantage.

These methods raise operational challenges, but they also open space for fairer comparisons and richer understanding of applicants’ capabilities.

Technological responses: watermarking, provenance, and process APIs

Technology providers are developing mechanisms to make AI use visible. Watermarking attempts to embed detectable signals in generated text; provenance systems attach metadata to content describing its origin; and process APIs can capture interactions between users and models.

Each approach has trade-offs. Watermarks can be evaded or removed. Provenance requires widespread adoption and standardized metadata formats. Process APIs raise privacy concerns: capturing how a student generated an essay requires careful consent frameworks and data governance. No technological fix can resolve the ethical and equity issues on its own, but combined with policy, technology can support more transparent evaluation.
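As a rough illustration of what a process log might record, the Python sketch below chains draft revisions together with hashes so that later edits to the history become detectable. It is a minimal sketch under stated assumptions, not any vendor's actual format: the `RevisionEntry` fields, the `source` labels, and the helper names are all hypothetical. It stores only a hash of each draft rather than the text itself, which is one possible way to limit the privacy exposure described above.

```python
import hashlib
import json
from dataclasses import dataclass, asdict

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass(frozen=True)
class RevisionEntry:
    index: int
    source: str       # hypothetical labels, e.g. "student" or "ai_assistant"
    text_sha256: str  # hash of the draft, so the log need not hold the essay
    prev_hash: str    # hash of the previous entry, linking the chain


def entry_hash(entry: RevisionEntry) -> str:
    """Hash an entry's fields in a stable (sorted-key) JSON encoding."""
    payload = json.dumps(asdict(entry), sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_revision(log, source, draft_text):
    """Return a new log with one more entry chained to the last one."""
    prev = entry_hash(log[-1]) if log else GENESIS
    text_hash = hashlib.sha256(draft_text.encode("utf-8")).hexdigest()
    return log + [RevisionEntry(len(log), source, text_hash, prev)]


def verify_log(log) -> bool:
    """Check that every entry points at the hash of the entry before it."""
    prev = GENESIS
    for position, entry in enumerate(log):
        if entry.index != position or entry.prev_hash != prev:
            return False
        prev = entry_hash(entry)
    return True


log = append_revision([], "student", "First rough draft.")
log = append_revision(log, "ai_assistant", "Suggested restructuring.")
log = append_revision(log, "student", "Final draft in my own words.")
print(verify_log(log))
```

A chain like this only reveals changes to earlier entries; the hash of the latest entry would still need to be held somewhere the applicant cannot rewrite, and none of it substitutes for the consent and governance questions, which remain policy decisions.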

Business implications and emerging markets

The counselor’s volte-face creates demand for new services. Expect growth in several verticals:

- AI-aware admissions consulting: Advising students on ethical AI use, documenting processes, and preparing applicants to explain their workflow.

- Institutional AI platforms: Campus-scale tools that provide sanctioned AI assistance and record usage logs for admissions review.

- Detection and provenance vendors: Firms offering robust metadata standards, secure logging, and verification services tailored for academic contexts.

- Edtech curriculum providers: Courses on AI literacy for high schoolers that cover prompt engineering, critical evaluation of AI outputs, and ethical considerations.

For universities, there’s an opportunity to partner with platform providers to democratize access to high-quality AI tools. Doing so mitigates equity concerns and positions institutions as active educators of AI competence rather than passive gatekeepers.

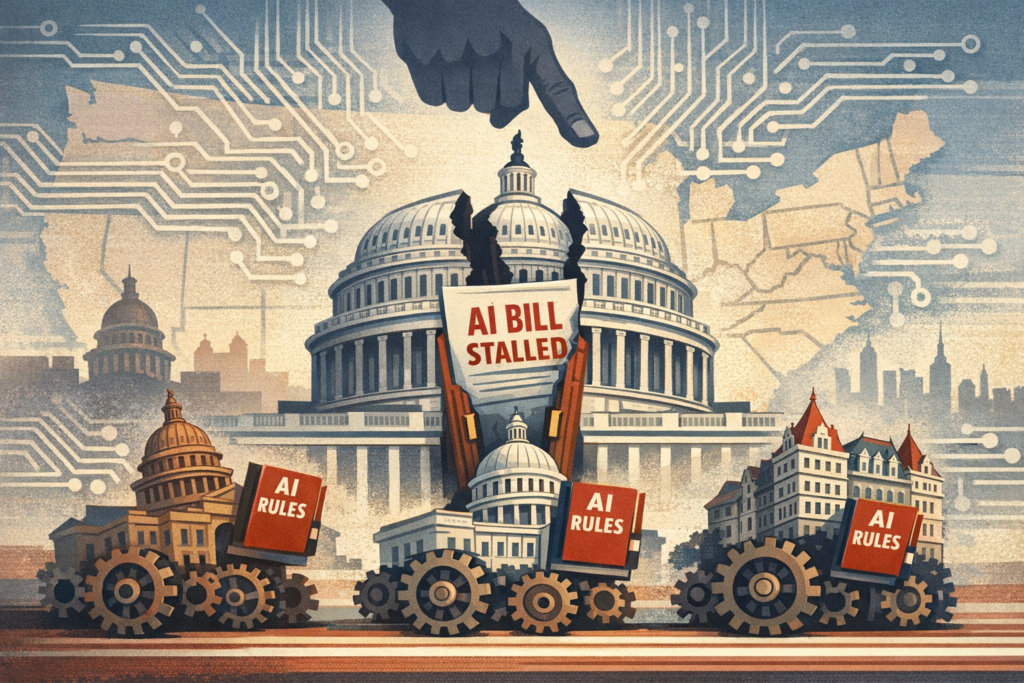

Regulatory and policy trajectories

Policy responses will likely be hybrid and iterative. Possible approaches include:

- Guidelines from accreditation bodies and education departments that define acceptable AI use in application materials.

- Transparency mandates requiring applicants to disclose significant AI assistance, similar to citation standards in research.

- Funding or subsidies to ensure equitable access to institutional AI tools for under-resourced students.

Regulators will face pressure to balance academic integrity with fairness and innovation. Heavy-handed bans risk being circumvented; permissive frameworks risk rewarding wealth. The pragmatic path will emphasize disclosure, education, and support systems that level the playing field.

Three plausible futures

From here, several trajectories are possible. Each is internally coherent, and which one prevails depends on how institutions, industry, and regulators act.

1. Integration and evidence

Universities accept AI-assisted submissions if accompanied by process evidence. AI literacy becomes part of secondary-school curricula. Edtech platforms standardize provenance metadata. Admissions move toward richer portfolios and in-person verification. This path treats AI as a ubiquitous tool and redesigns assessment to capture genuine competence.

2. Ban-and-enforce

Some elite institutions attempt strict bans, deploying detection tools and imposing penalties. The result is adversarial dynamics: students and consultants develop workarounds, and enforcement costs rise. This path preserves traditional signals in the short term but is unsustainable at scale.

3. Market stratification

Institutions diverge: some embrace AI and partner with platforms to democratize access; others ban it, preserving older modes of evaluation. Meanwhile, a new market emerges for premium AI-assisted application services, increasing stratification. Admissions credibility becomes a signaling game where policies are as much brand decisions as pedagogical ones.

What the counselor’s pivot signals for stakeholders

The practical takeaway is that AI is no longer an external threat to be policed; it’s an educational technology that must be integrated thoughtfully. For students, transparency and AI literacy will be assets. For admissions professionals, the shift demands new evaluative frameworks and stronger partnerships with educators and technologists.

For policymakers and vendors, the imperative is to build tools and rules that prioritize equity and process evidence over brittle detection regimes. The next decade will be defined not by whether students use AI, but by how institutions translate that use into meaningful assessments of talent and potential.

A change in a single counselor’s stance is a small event. Its significance lies in timing: it comes as generative AI matures into a default tool of composition and collaboration. The smarter response is not to pretend the technology isn’t present, but to craft norms, assessments, and systems that reflect the reality of human-machine partnership. That work will determine whether admissions continue to reward privilege or evolve to recognize the new literacies students bring to the table.