As artificial intelligence tools migrate from research labs into the palms of billions, a question moves from theoretical to urgent: what happens to our minds when machines increasingly shoulder the tasks of thinking, remembering and deciding? Recent research suggests the shift will not be abrupt or dramatic, like a dystopian takeover; instead, it may be a slow, cumulative rewiring of human cognition that unfolds over years or decades. That gradual erosion, seen in subtle declines in memory retention, attention span and problem-solving habits, poses dilemmas for tech companies, workplaces, educators and regulators alike.

Small habits, large effects: the cognitive ripple of everyday AI

People adopt AI assistants for convenience: autocomplete for writing, recommendation systems for media, calculators for arithmetic and search engines for facts. These conveniences reconfigure the cognitive economy of routine tasks. When you offload remembering phone numbers, drafting boilerplate emails, or mapping a route to a navigation app, you free mental bandwidth in the immediate term, but habitual outsourcing can weaken the very neural pathways that support those skills.

Think of cognition as a muscle. Occasional assistance is restorative; chronic reliance risks atrophy. The new research highlights patterns consistent with this hypothesis: modest but measurable declines in certain memory and attention tests among frequent AI users, alongside behavioral shifts such as decreased initiative in planning and critical evaluation. These are not catastrophic losses, but they accumulate across years and across populations, producing collective effects that shape labor markets and social norms.

Why gradual change is harder to see—and to regulate

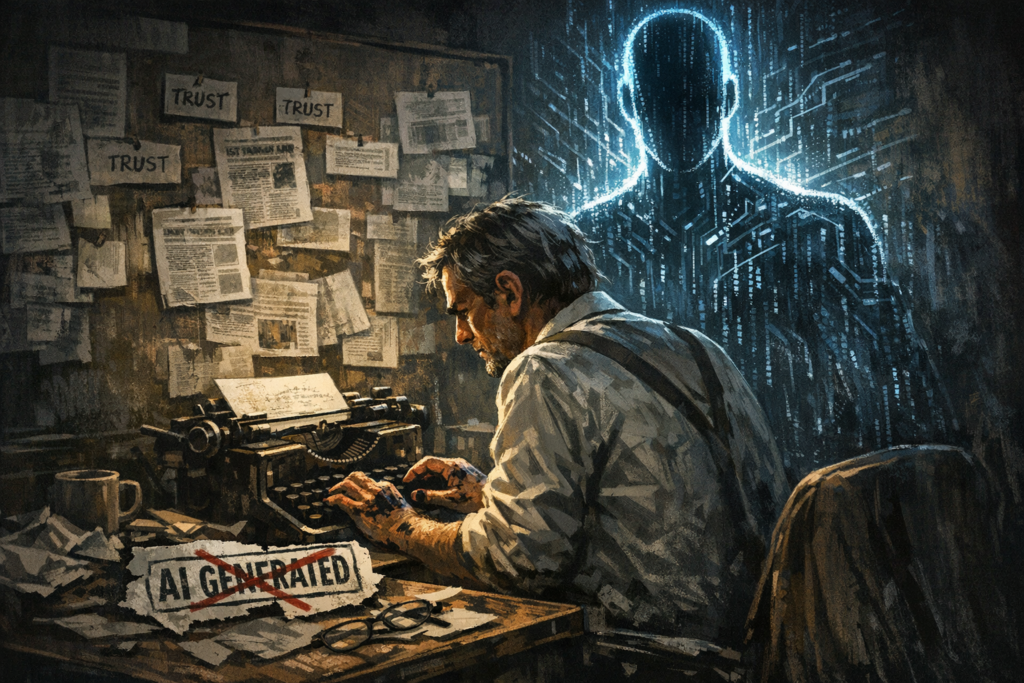

Slow-moving phenomena are notoriously difficult to address. Unlike an algorithmic bias that produces a headline-making failure, cognitive erosion creeps in through daily interactions with helpful interfaces that people quickly learn to trust. The result is a paradox: the same AI that enhances productivity and access may also, over time, undermine the cognitive capacities that made rapid innovation possible in the first place.

Strategic context: companies, investors and the premium on cognitive capital

Firms building AI platforms face a complex calculus. On one hand, more powerful assistance increases product stickiness and engagement. On the other, it risks long-term damage to the human capital that companies rely on: employees whose judgment, creativity, and resilience are essential for innovation.

Investors and executives should note three dynamics:

- Short-term productivity gains can mask long-term depreciation of critical workforce skills.

- Products that promote passive use (complete automation of tasks) may be profitable but socially unsustainable.

- There’s a market opportunity for tools that explicitly preserve or augment human cognition rather than replace it.

These dynamics will reshape competition. Companies that integrate “cognitive-preserving” design—features that encourage reflection, spaced recall, or mastery learning—could create a durable advantage by aligning user well-being with business goals. Educational tech, professional platforms, and enterprise AI that prioritize human-AI collaboration will likely win trust and regulatory favor.

Design trade-offs: convenience versus cognitive fitness

Designers face ethical and product decisions reminiscent of debates in nutrition and urban planning: what do you make easy, and what do you deliberately make slightly harder to promote healthier long-term outcomes? Choices matter. An app that writes a first draft for you may increase throughput today but reduce practice at structuring arguments tomorrow. A turn-by-turn navigation feature that hides route complexity saves time but may diminish spatial reasoning skills over repeated use.

Practical design interventions can mitigate risks without rejecting AI’s benefits. Examples include:

- Progressive assistance: start with hints, not answers, and increase automation as mastery grows.

- Delayed autocomplete: require a brief pause or confirmation before suggestions appear, so that users generate an initial response themselves.

- Active recall features: integrated prompts that periodically ask users to recall or explain rather than click to retrieve.

- Transparent suggestions: show the reasoning or provenance behind a recommendation to preserve evaluative engagement.
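
To make the first of these interventions concrete, here is a minimal sketch of progressive assistance in Python. It is an illustration under stated assumptions, not a reference design: the `Learner` record, the level names, the five-attempt minimum and the 0.6 and 0.85 thresholds are all hypothetical choices that a real product would need to tune and validate.

```python
from dataclasses import dataclass


@dataclass
class Learner:
    """Hypothetical record of one user's unaided attempts at a task."""
    attempts: int = 0
    successes: int = 0

    def mastery(self) -> float:
        # Share of unaided attempts that succeeded; 0.0 before any attempt.
        return self.successes / self.attempts if self.attempts else 0.0


def assistance_level(learner: Learner, min_attempts: int = 5) -> str:
    """Offer hints first and unlock more automation as mastery grows.

    The thresholds below are illustrative assumptions, not tested values.
    """
    if learner.attempts < min_attempts:
        # Too little evidence of skill: keep the user generating answers.
        return "hint"
    score = learner.mastery()
    if score < 0.6:
        return "hint"
    if score < 0.85:
        return "partial_draft"
    return "full_automation"
```

Under these assumptions a new user always starts with hints, and a user with nine unaided successes in ten attempts is offered full automation. The design choice worth noting is that automation is something the user earns through demonstrated skill rather than the default.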

Policy and regulatory implications: beyond data privacy

Conversations about AI regulation often focus on safety, privacy, and bias. Cognitive impact demands a new axis of policy attention. Public health departments, education ministries, and labor regulators may need to consider frameworks that protect and cultivate collective cognitive resilience.

Possible policy levers include:

- Standards for cognitive safety: guidelines for product features that demonstrably preserve cognitive skills in long-term use.

- Workplace norms: requirements for “human-in-the-loop” responsibilities in critical decision processes to prevent deskilling.

- Educational curricula: training students to use AI as a partner while continuing to build core cognitive skills like reasoning and memory.

- Disclosure rules: require labels on tools that substantially automate thought processes, so users can make informed choices.

These measures carry trade-offs. Overregulation could stifle innovation; underregulation could produce societal costs that are diffuse and deferred. The challenge is to calibrate policy to encourage beneficial AI that augments rather than erodes human cognitive capacities.

Business consequences and competitive dynamics

Organizations that fail to grapple with cognitive impacts risk several outcomes. In knowledge-intensive industries, an over-automated workforce may show reduced creativity, weaker analytical reasoning, and lower resilience in dealing with novel problems—traits critical for long-term competitiveness. Conversely, companies that invest in cognitive-preserving training and product design may see sustained innovation rates and higher employee retention.

Startups and incumbents will bifurcate along philosophical lines. One camp will sell maximum automation and convenience; another will position AI as a capability amplifier—tools that scaffold human skills, require active engagement, and track competency growth. The latter approach may attract customers with long horizons: universities, professional services, healthcare and government.

Three plausible trajectories

Which future unfolds depends on interactions among technology, market incentives, and policy. Consider three scenarios:

1. Seamless automation, slow cognitive decline

Market forces continue to reward frictionless automation. Productivity and GDP figures rise, but average performance on cognitive benchmarks drifts downward over decades. Society adapts: new roles emphasize oversight and emotional intelligence, while routine cognitive tasks migrate to machines. The burden falls on educational systems to remediate basic skills.

2. Balanced augmentation

Design norms and light-touch regulation promote human-AI symbiosis. Products incorporate features that maintain or improve cognitive skills. Employers and educators prioritize hybrid capabilities. The economy benefits from both high productivity and resilient, adaptable workers.

3. Cognitive renaissance

A counterintuitive path: recognition of erosion sparks a movement to reclaim cognitive practices. Investments in mental training, curriculum reforms, and “cognitive-first” product categories lead to an upswing in human capabilities alongside advanced AI, creating a virtuous cycle in which AI is explicitly used to train, not replace, human thought.

What leaders and individuals can do now

There’s no single cure, but pragmatic steps can blunt risks and capture opportunities.

- For product teams: build metrics that track user learning and engagement with critical thinking features, not just click-throughs.

- For HR and executives: design workflows that preserve decision ownership and rotate tasks to keep skills sharp.

- For educators: teach metacognitive strategies (how to learn with AI, not from it), plus verification and source-evaluation skills.

- For individuals: practice deliberate use—set bounds on when you rely on AI, and schedule “off-AI” exercises that challenge memory and reasoning.

These are tactical moves that, multiplied across millions of interactions, can slow or even reverse erosive trends.
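
For product teams, one way to look beyond click-throughs is to log whether each task was completed with or without AI help and to track how users fare on their own. The Python sketch below is a rough illustration only: the `TaskEvent` log format and both metric names are assumptions made for this example, not an established standard.

```python
from typing import Iterable, NamedTuple, Optional


class TaskEvent(NamedTuple):
    """Hypothetical log entry for one completed task."""
    used_ai: bool   # True if the user accepted an AI-generated answer
    correct: bool   # True if the final result was judged correct


def reliance_rate(events: Iterable[TaskEvent]) -> Optional[float]:
    """Share of tasks completed with AI help; None if nothing was logged."""
    events = list(events)
    if not events:
        return None
    return sum(e.used_ai for e in events) / len(events)


def unaided_accuracy(events: Iterable[TaskEvent]) -> Optional[float]:
    """Accuracy on tasks done without AI; None if there were no such tasks."""
    unaided = [e for e in events if not e.used_ai]
    if not unaided:
        return None
    return sum(e.correct for e in unaided) / len(unaided)
```

Tracked over months, a rising reliance rate paired with falling unaided accuracy would be one possible warning sign of deskilling, while stable unaided accuracy would suggest the tool is supporting rather than replacing the skill.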

A forward-looking reflection

The integration of AI into daily life is not destiny written by algorithms alone; it is a socio-technical negotiation. The incremental erosion described by recent studies is real but not inevitable. Choices made by designers, managers, policymakers and everyday users will determine whether AI becomes an anesthetic for human cognition or a tonic that helps minds adapt and grow.

The smarter path is not to reject automation, but to design a future where artificial intelligence amplifies, disciplines and expands human thought. That requires honesty about trade-offs, investment in cognitive-preserving design, and public conversations about what kinds of thinking we value. If we get those elements right, AI will not be the slow erosion of our cognitive heritage but the scaffolding for a new era of human ingenuity.