The announcement that the leader of a major U.S. public hospital intends to substitute radiologists with artificial intelligence has jolted conversations across health systems, vendor boardrooms, and radiology reading rooms. At first blush it reads like a headline engineered to provoke: a blunt solution to rising costs and growing imaging volumes. But beneath the provocation lie real pressures driving hospitals toward automated image interpretation, along with technical limits, regulatory hurdles, and profound workforce implications that will determine whether such a plan becomes a blueprint or a cautionary tale.

Why a hospital CEO would float replacing radiologists

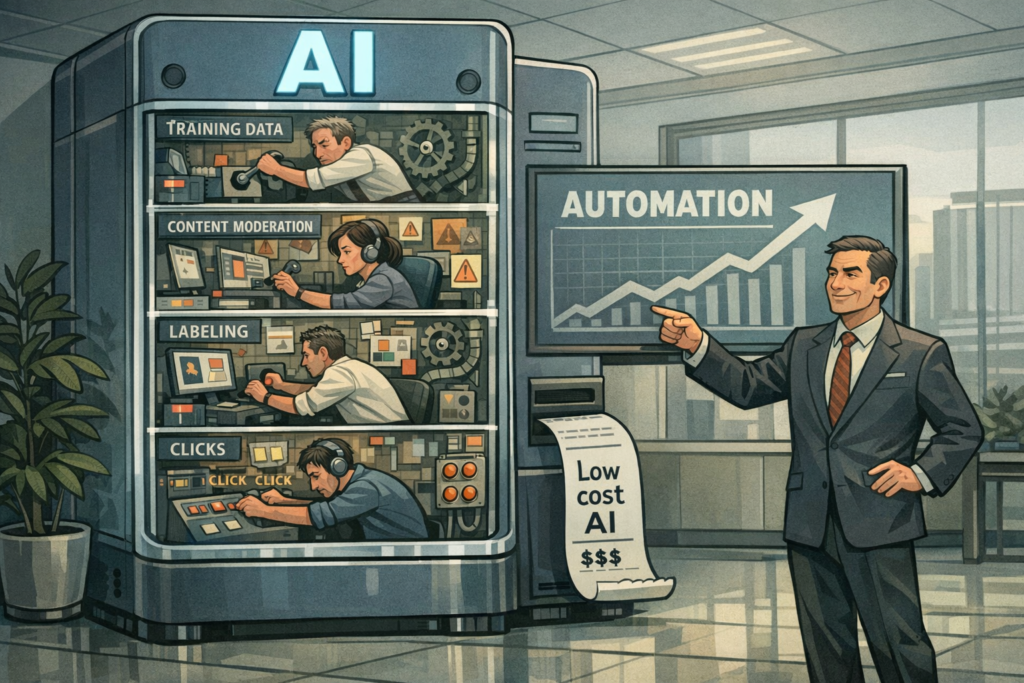

Health care delivery is being squeezed from multiple directions: shrinking margins, growing imaging demand, and uneven access to subspecialty expertise in smaller or safety-net facilities. Advanced chest CTs, trauma series, and routine X-rays flood emergency departments at night and on weekends; staffing around-the-clock radiology coverage is expensive and sometimes impossible in underserved areas. Meanwhile, dozens of AI algorithms have gained regulatory clearance to detect specific pathologies such as pulmonary embolism, intracranial hemorrhage, and pneumothorax, and vendors pitch rapid triage as a way to prioritize urgent studies and reduce diagnostic delays.

In that context, a CEO may see AI as a lever to lower costs, reduce turnaround times, and expand apparent coverage without the salary and benefit expenses of additional radiologists. There’s also a symbolic component: positioning the hospital as aggressive and innovative in adopting technology that promises efficiency gains. But the rhetoric of “replace” conflates several different technical and operational possibilities.

Replacement, augmentation, or redistribution?

When leaders talk about replacing clinicians with AI, they often mean one of three models:

- Augmentation: AI triages studies and highlights abnormalities while radiologists make the final interpretation — an efficiency-first model that preserves human oversight.

- Task redistribution: Low-complexity cases (e.g., normal chest X-rays) are handled by AI as a first read, with radiologists intervening only for flagged or complex studies.

- Full automation: Algorithms provide definitive reads and reports without human sign-off — the most disruptive and regulatory-challenged approach.

Each model carries different risk profiles and operational requirements. The CEO’s public language may be emphatic, but operational reality — liability, accreditation, clinician acceptance, and algorithmic limits — tends to push institutions toward augmentation and redistribution rather than outright elimination.
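The difference between the three models comes down to who issues the final read for a given study. The sketch below is one way to express that routing decision. The names `AiResult`, `route_study`, and `normal_cutoff`, along with the 0.05 cutoff, are hypothetical and chosen only for illustration; they do not describe any vendor's product or a validated clinical threshold.

```python
from dataclasses import dataclass
from enum import Enum


class Model(Enum):
    AUGMENTATION = "augmentation"
    REDISTRIBUTION = "task_redistribution"
    FULL_AUTOMATION = "full_automation"


@dataclass
class AiResult:
    abnormal_score: float  # hypothetical model output in [0, 1]
    complex_study: bool    # e.g. post-operative anatomy, marked upstream


def route_study(result: AiResult, model: Model, normal_cutoff: float = 0.05) -> str:
    """Return who issues the final read: 'radiologist' or 'ai'."""
    if model is Model.AUGMENTATION:
        # AI only prioritizes and highlights; a radiologist always signs off.
        return "radiologist"
    if model is Model.REDISTRIBUTION:
        # AI finalizes only confident normals on simple studies.
        if result.abnormal_score < normal_cutoff and not result.complex_study:
            return "ai"
        return "radiologist"
    # Full automation: no human sign-off on any study.
    return "ai"
```

Under task redistribution, a study scored 0.01 with no complexity flag would be finalized by the algorithm, while the same study under augmentation would still go to a radiologist. Where the cutoff sits is a clinical governance decision, not a software default.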

Technical and clinical limits that complicate wholesale replacement

State-of-the-art imaging AI has made impressive progress, but there are important caveats. Many algorithms perform well on specific, narrowly defined tasks under controlled conditions. Translating that performance into reliable, broad clinical practice is hard.

- Generalization and dataset shift: AI trained on one population or scanner vendor may underperform on images from different hospitals or patient demographics.

- Edge cases and rare disease: AI struggles where training examples are scant — uncommon pathologies, complicated post-operative anatomy, or subtle presentations that require integrative clinical judgment.

- Explainability and trust: Clinicians and patients want to know why a decision was made. Many deep learning models remain opaque, which hinders trust and clinical adoption.

- Adversarial and practical failure modes: Small changes in acquisition or image quality can alter algorithm outputs; robust clinical deployment requires extensive monitoring and fail-safes.

These limitations underline why radiologists are not merely pattern-recognition engines. They synthesize history, prior imaging, lab results, and clinician queries — functions that current AI systems can only partially address.
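One practical response to the generalization concern above is to track algorithm performance separately for each site, scanner vendor, or patient subgroup, using radiologist-confirmed findings as the reference. The following is a minimal sketch under that assumption. The function names, the tuple layout, and the example threshold are all hypothetical, and a real program would also need confidence intervals and adequate sample sizes.

```python
from collections import defaultdict


def rates_by_group(cases):
    """cases: iterable of (group, ai_positive, truth_positive) tuples.

    Returns {group: (sensitivity, specificity)}; a rate is None when the
    group has no positive (or no negative) reference cases.
    """
    counts = defaultdict(lambda: {"tp": 0, "fn": 0, "tn": 0, "fp": 0})
    for group, ai_pos, truth_pos in cases:
        if truth_pos:
            key = "tp" if ai_pos else "fn"
        else:
            key = "fp" if ai_pos else "tn"
        counts[group][key] += 1

    out = {}
    for group, c in counts.items():
        pos = c["tp"] + c["fn"]
        neg = c["tn"] + c["fp"]
        sens = c["tp"] / pos if pos else None
        spec = c["tn"] / neg if neg else None
        out[group] = (sens, spec)
    return out


def groups_below(rates, min_sensitivity):
    """Return the sorted groups whose sensitivity falls under the floor."""
    return sorted(
        g for g, (sens, _) in rates.items()
        if sens is not None and sens < min_sensitivity
    )


# Illustrative data only: two hypothetical scanner vendors.
cases = [
    ("vendor_a", True, True), ("vendor_a", True, True), ("vendor_a", False, False),
    ("vendor_b", False, True), ("vendor_b", True, True), ("vendor_b", False, False),
]
rates = rates_by_group(cases)
flagged = groups_below(rates, min_sensitivity=0.9)
```

A group that falls below the floor is a prompt for human review of that site's images and acquisition protocols, not proof that the algorithm has failed there.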

Regulatory, legal, and reimbursement hurdles

Even confident executives face a regulatory environment that is not yet designed for autonomous diagnostic decisions. In the U.S., the FDA clears or approves algorithms for specific intended uses, but clearance is not equivalent to green-lighting unsupervised clinical automation. Professional societies and state medical boards have voiced expectations that a qualified professional be responsible for diagnostic decisions. Malpractice frameworks remain unsettled: if an AI misses a critical finding, is the vendor, the hospital, or the absent human clinician liable?

Reimbursement is another constraint. Current payment models typically assume clinician interpretation. Medicare and private payers have started to recognize digital diagnostics and imaging AI in narrow cases, but broad coverage policies that would reimburse hospital costs associated with automated reads are not yet widespread. Without reimbursement alignment, financial incentives for radical substitution are weaker than they appear.

Industry dynamics: vendors, incumbents, and new entrants

The market for medical imaging AI is crowded and consolidating. Large tech firms, specialized startups, and imaging vendors all compete to own the workflow layer in radiology. The companies that succeed will be those that can embed robust models into PACS and EHR systems, provide continuous model monitoring, manage regulatory compliance, and demonstrate cost-effective clinical outcomes.

Hospitals will have choices: license algorithms from multiple vendors and orchestrate them internally, partner with cloud-based platforms that promise end-to-end services, or develop in-house models if they have sufficient data science resources. Public systems with limited budgets may be tempted by lower-cost or open-source options, but those choices bring operational and governance burdens, including maintaining retraining pipelines and ensuring data privacy.

Human factors: workforce transition and new roles

An immediate consequence of serious adoption will be role redefinition rather than wholesale displacement. Radiology will likely evolve along familiar automation tracks: AI takes over repetitive or low-value tasks, while humans concentrate on complex, integrative, and value-adding work. That transition creates opportunities and friction.

Possible new or expanded roles include:

- AI oversight radiologist — responsible for algorithm validation, QA, and escalation policies.

- Clinical data stewards — ensuring data quality and governance for continuous model improvement.

- Workflow integrators — engineers and informaticians who stitch together AI outputs with PACS/EHR and reporting systems.

Training and credentialing will be crucial. Radiology residency programs may need to expand AI literacy, informatics, and quality assurance training. Unions and professional bodies will press for negotiated pathways that protect jobs and patient safety while allowing innovation.

Realistic trajectories: scenarios for the next five years

Several plausible futures emerge depending on regulatory responses, vendor maturity, and hospital risk tolerance:

- Measured augmentation (most likely): AI becomes standard as a triage and second-reader tool. Radiologists’ throughput rises, turnaround times fall, and patient outcomes improve in many settings. Human oversight remains the norm.

- Targeted automation in narrow domains: Low-risk, high-volume tasks (e.g., routine follow-up chest X-rays in screening programs) are automated and may be handled without immediate radiologist sign-off, subject to strict protocols and audits.

- Legal and public backlash slows adoption: High-profile errors or regulatory pushback prompts conservative deployment and stronger human-in-the-loop requirements.

- Radical transformation in safety-net settings: Under-resourced hospitals adopt AI aggressively to fill gaps, but outcomes vary and ethical concerns about two-tier care provoke policy debate.

What leaders should consider now

For hospital executives, technologists, and policy makers, the path forward requires balancing ambition with humility. Key priorities:

- Start with pilots that have clear safety nets: Test AI in controlled settings with robust monitoring and rapid rollback options.

- Measure clinical outcomes, not just time-to-read: Improved throughput is valuable only if diagnostic accuracy and patient outcomes are maintained or improved.

- Invest in governance: Data stewardship, performance monitoring, and incident reporting must be institutionalized before scaling.

- Engage clinicians early: Adoption succeeds when radiologists co-design workflows and understand algorithm limits.

- Plan workforce transitions: Upskilling and role redefinition should be funded and negotiated with stakeholders to avoid abrupt displacement.
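The first two priorities, pilots with rollback options and measurement of accuracy rather than speed alone, can be combined into a simple monitoring rule. The sketch below assumes each pilot study is double-read so that AI misses can be recorded. The class name `PilotGate` and its default values are hypothetical placeholders, not recommended clinical thresholds.

```python
from collections import deque


class PilotGate:
    """Tracks recent AI misses against radiologist reads and signals rollback."""

    def __init__(self, window: int = 200, max_miss_rate: float = 0.02,
                 min_cases: int = 50):
        # Only the most recent `window` cases are kept; True means the AI
        # missed a finding that the radiologist reported.
        self.recent = deque(maxlen=window)
        self.max_miss_rate = max_miss_rate
        self.min_cases = min_cases

    def record(self, ai_missed_finding: bool) -> None:
        self.recent.append(ai_missed_finding)

    def miss_rate(self) -> float:
        return sum(self.recent) / len(self.recent) if self.recent else 0.0

    def should_roll_back(self) -> bool:
        # Avoid reacting to a handful of cases; wait for a minimum sample.
        enough_cases = len(self.recent) >= self.min_cases
        return enough_cases and self.miss_rate() > self.max_miss_rate
```

A rolling window keeps the rule sensitive to recent degradation, for example after a scanner software update, instead of averaging it away over the whole pilot. Turnaround time can be logged alongside, but in this sketch it never overrides the accuracy check.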

Final perspective: transformation, not termination

The headline-grabbing idea of replacing radiologists with AI obscures a subtler reality: AI is a powerful lever for reconfiguring imaging services, but it will not be a drop-in replacement for human readers anytime soon. The CEO’s statement should be read as an intentional provocation that forces necessary conversations about cost, access, and innovation. Whether it catalyzes responsible experimentation or reckless cost-cutting depends on governance, transparency, and an honest accounting of AI’s limits.

Ultimately, the value of imaging AI will be judged not by a claim to make clinicians obsolete, but by whether it helps health systems deliver faster, fairer, and more accurate care. That requires aligning technical capability with clinical judgment, ethical oversight, and regulatory frameworks that protect patients while allowing hospitals to innovate. In the next chapter of radiology, the most likely outcome is not extinction of the specialty but its reinvention, staffed by clinicians who read the harder cases, manage systems, and steward the algorithms that have become their new colleagues.