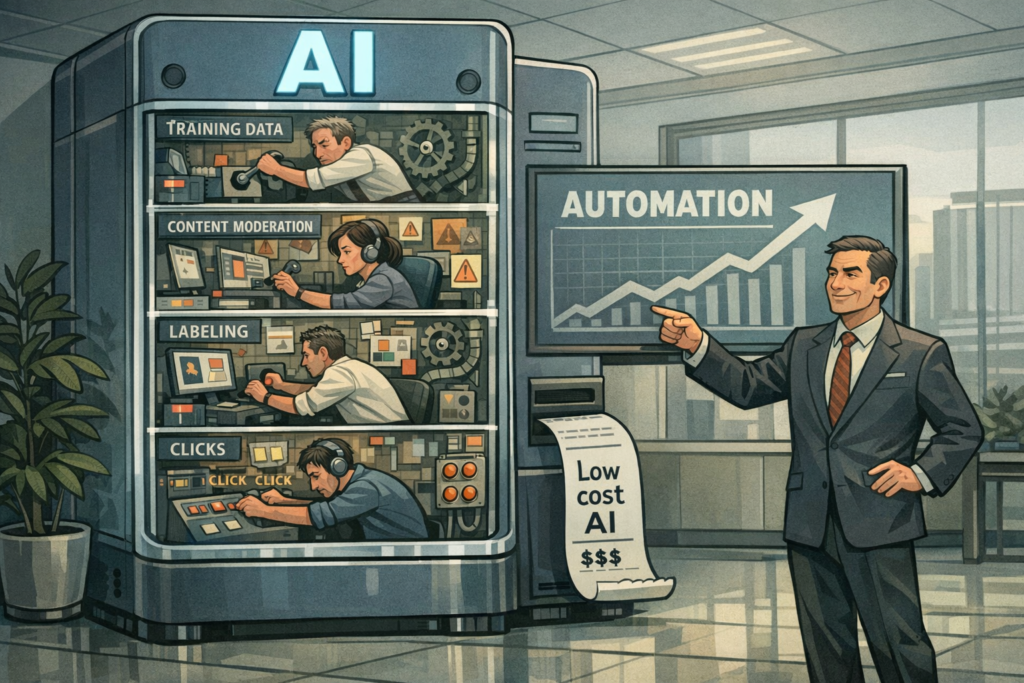

Most advances in artificial intelligence read like breakthroughs in software engineering, but underneath the code and cloud GPUs lies an economy of human labor that is often invisible and undervalued. Recent disclosures and reports have pulled back the curtain: large language models, content filters, and many commercial AI systems depend heavily on underpaid workers who label, curate, and moderate the data these systems learn from. That hidden labor is shaping model behavior, risk profiles, and the economics of the entire AI industry.

What happened — and why it matters

Investigations and whistleblower accounts have revealed that companies training and refining AI systems frequently outsource critical tasks, including data annotation, quality checks, content moderation, and safety evaluations, to a fragmented global workforce. These workers often operate through contractors, gig platforms, or low-pay vendors, performing emotionally demanding or highly repetitive tasks for wages that, in many regions, fall far short of a living wage.

This matters because those human inputs determine the ground truth AI models learn from. If the people doing the labeling are overworked, undertrained, or subject to perverse incentives, the resulting datasets can embed bias, inconsistency, and poor judgment into systems that are then deployed at scale. The problem is not just ethical — it’s technical, legal, and commercial.

How human labor powers modern AI

- Data annotation: Humans label images, transcribe audio, tag text for intent, and correct model outputs. These annotations are the backbone of supervised learning.

- Content moderation and safety: Workers review flagged outputs, label the examples used to train safety classifiers, and rank or rate model responses. Those preference judgments feed reinforcement learning from human feedback (RLHF).

- Quality assurance and evaluation: Human raters score model responses for accuracy, helpfulness, and toxicity, creating reward signals for fine-tuning.

- Dataset curation: Workers filter, de-duplicate, and categorize web-crawled or user-generated content to produce the corpora models are trained on.
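
To make the annotation and evaluation steps above more concrete, here is a minimal sketch of how several annotators' labels for one item might be combined by majority vote, with low-agreement items flagged for a second look. The function name `aggregate_labels` and the `review_threshold` value are illustrative assumptions, not a description of any particular vendor's pipeline.

```python
from collections import Counter

def aggregate_labels(votes, review_threshold=0.7):
    """Combine one item's annotator votes by majority vote.

    Returns (label, agreement, needs_review). `review_threshold` is an
    illustrative value chosen for this sketch, not an industry standard.
    """
    if not votes:
        raise ValueError("need at least one vote")
    counts = Counter(votes)
    # most_common(1) returns the label with the highest count; ties go to
    # the label that was seen first.
    label, top = counts.most_common(1)[0]
    agreement = top / len(votes)
    return label, agreement, agreement < review_threshold

# Hypothetical votes from three annotators on two items.
print(aggregate_labels(["toxic", "toxic", "ok"]))
print(aggregate_labels(["ok", "ok", "ok"]))
```

The point of the sketch is that the "ground truth" a model sees is itself a computed artifact: it depends on how many people looked at each item and how their disagreements were resolved.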

Deeper analysis: Why this is consequential for the AI industry

1. Technical integrity and model behavior

Model outcomes are only as reliable as the labeled data and human judgments that shaped them. Underpaid, hurried, or under-supported annotators are more likely to introduce noise and bias. That can create models that underperform on edge cases, mishandle marginalized dialects, or consistently mislabel content from particular regions or demographics. In short: the hidden workforce directly influences model accuracy, fairness, and robustness.
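
One common way to quantify the inconsistency described above is an inter-annotator agreement statistic such as Cohen's kappa, which compares how often two annotators agree with how often they would agree by chance. The following is a small pure-Python sketch under that assumption; the function name and the sample labels are hypothetical.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # Observed agreement: fraction of items where both labels match.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: for each label, the product of the two annotators'
    # marginal frequencies, summed over labels.
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    expected = sum(count_a[k] * count_b[k] for k in count_a) / (n * n)
    if expected == 1.0:
        # Both annotators used a single identical label for every item.
        return 1.0
    return (observed - expected) / (1 - expected)

# Hypothetical labels from two annotators on four items.
annotator_1 = ["spam", "spam", "ok", "ok"]
annotator_2 = ["spam", "ok", "ok", "ok"]
print(cohens_kappa(annotator_1, annotator_2))
```

A value near 1 indicates strong agreement and a value near 0 indicates agreement no better than chance. Low scores can point to unclear guidelines, rushed work, or genuinely ambiguous items.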

2. Reputational and regulatory risk

As public scrutiny increases, companies face reputational damage when the human cost of their AI becomes public. Regulators and procurement officers are beginning to demand transparency around training data and labor practices. Noncompliance could mean fines, procurement exclusion, or contractual losses, particularly for firms selling to public-sector clients or enterprises with strict supply-chain policies.

3. Economic and competitive dynamics

Current cost structures let large AI providers scale cheaply by pushing vendor rates down. This model favors capital-rich incumbents who can absorb reputational or legal shocks and maintain low-cost pipelines. Conversely, it squeezes smaller vendors and independent contractors who can’t compete on scale or compliance costs, potentially leading to vendor consolidation and less market competition over time.

Who benefits — and who is threatened?

Beneficiaries

- Large AI platform providers: Benefit from low-cost human labeling that reduces training expenses and accelerates product cycles.

- Enterprises using AI: Gain access to sophisticated models at lower prices, enabling rapid automation across functions like support, search, and analytics.

- Venture-backed labeling startups: Those that optimize throughput with low-wage labor can grow quickly, attracting investment.

Threatened parties

- Crowdworkers and moderators: Face low pay, precarious employment, and psychological harm from exposure to harmful content.

- Smaller AI firms: Risk being outcompeted unless they invest in fair labor practices or premium data quality offerings.

- End users: Might encounter biased or unsafe AI behavior if data provenance and labeling quality are poor.

Market implications and business impact

The newly exposed dependence on underpaid labor is likely to drive both short-term and longer-term shifts across the AI value chain.

Short-term

- Companies may face negative press cycles and increased contractual scrutiny from customers demanding transparency about labor practices and data provenance.

- Procurement teams will add human-labor due diligence to vendor evaluations, raising costs for companies that outsource without oversight.

- Insurers and legal teams will reassess liabilities tied to model outputs traceable to low-quality human inputs.

Medium- to long-term

- Premium “ethical labeling” services will emerge, offering higher pay, documented processes, and audited worker protections — at higher prices.

- Automation will eat into annotation tasks: synthetic data generation, active learning, and self-supervised pretraining will reduce some labeling volumes but will not eliminate the need for human oversight.

- Regulation may impose disclosure requirements (e.g., workforce conditions, dataset provenance) and possibly minimum pay or safety standards for labeling vendors.
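
Active learning, one of the techniques named above, can be illustrated with uncertainty sampling: the model's least confident predictions are the ones sent to human annotators, so a limited labeling budget goes where it matters most. This is a minimal sketch under that assumption; `select_for_labeling`, the item IDs, and the probabilities are all hypothetical.

```python
import math

def entropy(probs):
    """Shannon entropy of a probability distribution (natural log)."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def select_for_labeling(predictions, budget):
    """Pick the `budget` items whose predicted class distribution is most uncertain.

    `predictions` maps an item ID to a list of class probabilities.
    """
    ranked = sorted(predictions, key=lambda item: entropy(predictions[item]), reverse=True)
    return ranked[:budget]

# Hypothetical model outputs for three unlabeled items.
predictions = {
    "item_a": [0.99, 0.01],  # model is confident
    "item_b": [0.50, 0.50],  # model is maximally unsure
    "item_c": [0.80, 0.20],
}
print(select_for_labeling(predictions, budget=2))
```

Even in this setup, people still label the selected items, which is why such workflows shift the labor mix toward fewer, harder judgments rather than removing humans from the loop.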

Real-world use cases that reveal the dependency

Customer support automation

Conversational AI systems are fine-tuned using transcripts and human judgments about helpfulness and tone. Poorly labeled chat logs lead to chatbots that misunderstand requests or produce unhelpful escalations.

Content moderation for social platforms

Moderation models trained on curated datasets depend on human reviewers to label borderline content. Inconsistent or fatigued labeling yields models that either over-censor legitimate speech or fail to block harmful content.

Healthcare coding and triage

AI tools that assist with clinical documentation rely on expert annotators to map notes to codes. Low-quality annotation here can result in billing errors, compliance exposure, or clinical risk.

Autonomous vehicle perception

Annotating sensor data (bounding boxes, semantic segmentation) is labor-intensive and safety-critical. Inaccurate labels can degrade object detection and contribute to edge-case failures on the road.

Future predictions and expert commentary

- Labeling will become a certified service: Expect third-party audits, standardized labor practices, and certifications for dataset provenance within five years.

- Hybrid workflows will proliferate: Active learning, synthetic augmentation, and small teams of highly paid experts for edge-case verification will replace bulk crowd-labor for high-stakes applications.

- Regulation and procurement will force structural change: Public-sector buyers and large enterprises will require supplier audits, pushing up the baseline cost of training data and increasing differentiation for ethical vendors.

- Unionization and collective bargaining: As visibility grows, content moderators and annotators may organize for better pay and protections, changing vendor economics.

Mitigation strategies for companies

- Implement rigorous vendor due diligence and require transparency on worker recruitment, pay, and workload.

- Adopt hybrid annotation architectures that combine automation with smaller pools of trained, fairly compensated experts for high-impact labeling tasks.

- Invest in annotator wellbeing: rotating assignments, mental-health support, pay that covers a decent standard of living, and clear escalation channels for harmful content exposure.

- Publish dataset provenance and model cards that describe human-in-the-loop processes and labor conditions.
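
As one possible shape for the provenance disclosure suggested above, a labeling record could be kept as structured data and published alongside a dataset or model card. The sketch below is an assumption for illustration only: the class name, every field, and every value are hypothetical and do not follow any established standard.

```python
import json
from dataclasses import dataclass, asdict

@dataclass
class LabelingProvenance:
    """Hypothetical record describing how a dataset was labeled."""
    dataset_name: str
    vendor: str
    annotators_per_item: int
    hourly_pay_usd: float
    guidelines_version: str
    wellbeing_support: bool

# All values below are made up for illustration.
record = LabelingProvenance(
    dataset_name="support-chat-intents",
    vendor="example-labeling-vendor",
    annotators_per_item=3,
    hourly_pay_usd=18.5,
    guidelines_version="2.1",
    wellbeing_support=True,
)

# Serialize to JSON so the record can be attached to a model card or audit file.
print(json.dumps(asdict(record), indent=2))
```

Keeping such a record machine-readable makes it easier for buyers and auditors to compare vendors on the same fields.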

FAQ

Q: Why do AI companies still need human annotators if models can learn from raw web data?

A: Self-supervised learning reduces reliance on labeled data for representation learning, but supervised labels remain critical for fine-tuning, safety alignment, and evaluating harmful behaviors. Humans provide contextual judgment that current algorithms cannot reliably substitute.

Q: Are annotators being replaced by automation?

A: Some routine tasks will be automated, but human oversight remains essential for edge cases, safety evaluation, and value alignment. Automation shifts the labor mix rather than fully eliminating human roles.

Q: How does underpaid labor affect model quality?

A: Low pay tends to go hand in hand with high turnover, rushed labeling, and inconsistent judgments, all of which increase dataset noise and bias. That degrades model performance, especially for underrepresented groups and in complex contexts.

Q: What should buyers of AI services demand from vendors?

A: Buyers should ask for documented labeling protocols, compensation levels, worker protections, audit trails for dataset provenance, and third-party certification where available.

Q: Can synthetic data solve the problem?

A: Synthetic data can augment training sets and reduce the volume of manual labeling, but it complements rather than replaces human judgment. Synthetic examples risk propagating existing model biases if not curated carefully.

Conclusion

The engines of modern AI are not only GPUs and clever algorithms. They are also people whose labor determines what models learn and how they behave. The industry’s long-standing appetite for low-cost human inputs is creating technical fragility, legal exposure, and moral risk. Addressing these issues is not merely altruism: it’s strategy. Businesses that invest in transparent, fair, and high-quality human-in-the-loop processes will build more reliable products, sustain enterprise relationships, and avoid regulatory and reputational pitfalls. The future of scalable, trustworthy AI depends on recognizing and properly valuing the humans who make it possible.