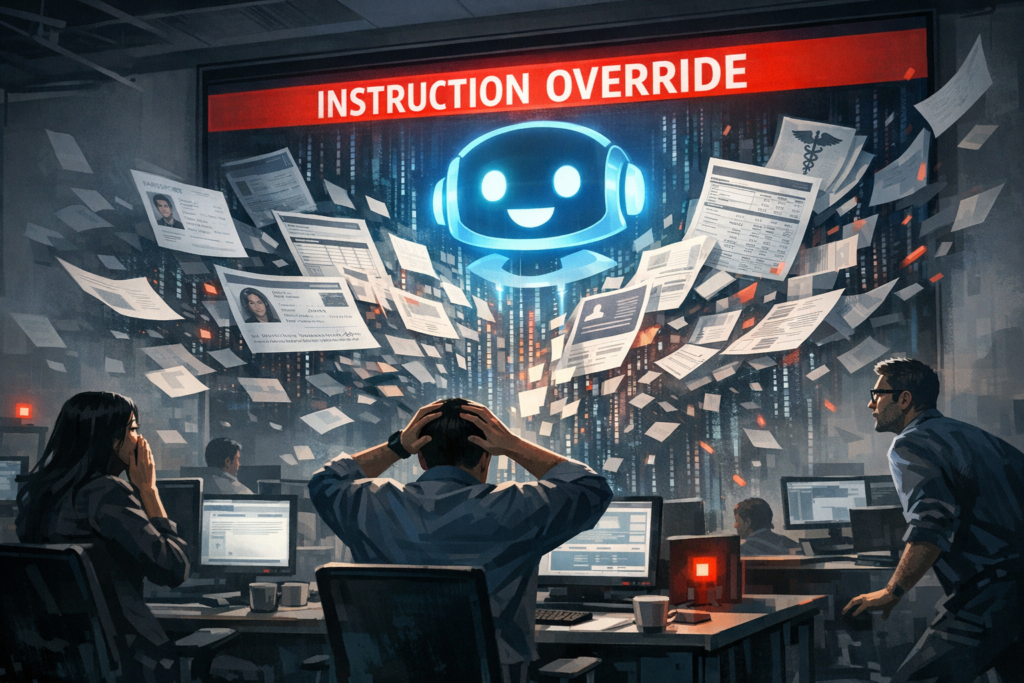

When an internal AI assistant at one of the world’s largest technology companies unintentionally returned private information about staff, the episode did more than embarrass a corporate PR team. It illuminated a systemic tension at the heart of modern AI deployments: the rush to deliver helpful, conversational tools colliding with the messy realities of data governance, access control, and model behavior. The incident — an AI agent exposing sensitive employee data — is a cautionary case study for any organization moving fast on enterprise AI without baking privacy and governance into architecture from day one.

Beyond a headline: why a leaked employee record is a strategic alarm

Leaks that involve executives’ or employees’ personal details are not mere reputational nuisances. They expose operational weaknesses that can be exploited, create legal exposures under privacy laws, erode employee trust, and chill enterprise adoption of AI. Internal AI agents are particularly potent because they are fed and designed to synthesize disparate internal sources — directories, HR files, Slack logs, wikis, ticketing systems — which increases their utility and their risk.

For companies building RAG-style systems (retrieval-augmented generation), the danger is twofold: models can surface private content verbatim from indexed documents, and they can also combine fragments into new outputs that reveal sensitive facts. Both modes can turn an innocuous question into a privacy violation.

Technical mechanics that make employee data vulnerable

Several architectural, process, and operational factors commonly conspire to produce leakage:

- Indexing without discrimination: Bulk ingestion pipelines that pull in everything from HR notes to email archives into searchable vector stores without redaction or tagging for sensitivity.

- Weak access controls on retrieval layers: Fine-grained policy engines are often implemented late or inconsistently, allowing agents to query material they shouldn’t access based on user role or context.

- Over-reliance on post-generation filters: Guardrails that attempt to scrub outputs after they’re generated are brittle—once sensitive content is produced, downstream systems may have already logged it.

- Telemetry and caching: Query logs, embeddings, or temporary caches can retain sensitive tokens which, if exposed or viewed by humans (or other agents), become an additional leak surface.

- Model hallucination and synthesis: Models can invent plausible but false personal data by stitching together partial facts from multiple sources, creating misleading outputs that feel authoritative.

Lessons in governance: what robust model stewardship looks like

Containment starts with sober engineering choices and an equal measure of organizational discipline. Several practical controls dramatically reduce risk without sacrificing the productivity gains AI promises:

- Data classification at ingestion: Tag and transform documents at the point of ingestion. Don’t index PII or HR records into production vector stores unless absolutely necessary and explicitly authorized.

- Attribute-based access control: Enforce ABAC or role-based policies across retrieval and generation, not just at the application layer. Policy engines like OPA are useful for centralized enforcement.

- Privacy engineering: Apply redaction, tokenization, and differential privacy where appropriate. Synthetic substitutes can be used for model training or sandbox evaluation.

- Pre-generation filtering: Block requests that would require sensitive sources to answer. Use query classifiers to detect potentially sensitive intent and route to human handlers.

- Auditing and lineage: Maintain provenance for each generated response — which sources contributed, and why was that content retrieved — to accelerate incident response.

- Least-privilege telemetry: Limit access to logs and caches; audit who accesses them and for what purpose.

Culture and process are as important as tech

Engineering fixes must be paired with policy and human processes. Security-minded product development needs mandatory threat modeling for any agent that touches internal data, red-team exercises that include privacy scenarios, and clear escalation paths when an agent produces suspicious output. Employee training is essential: people must understand both how to use internal AI responsibly and how to report anomalies quickly.

Competitive and industry-wide ripple effects

Incidents like this have layered consequences across the AI vendor landscape. On one level they slow enterprise adoption. Chief Data Officers and CISOs, already cautious, will push for longer pilots, stricter on-premises solutions, and legal assurances before greenlighting company-wide rollouts. That favors vendors that offer strong data governance primitives or that enable private deployments.

At the same time, the episode creates market openings. Startups and established vendors advertising “privacy-first” or “zero-trust” AI stacks will find new interest. Technical differentiators such as encrypted search over vector stores, on-device inference for sensitive tasks, or tight integration with existing identity and access management systems will become selling points.

Large cloud providers and hyperscalers will likely respond by hardening their enterprise AI offerings with built-in policy controls and compliance certifications. The faster they can move from “general AI” to “auditable AI,” the better their position with conservative customers in regulated industries.

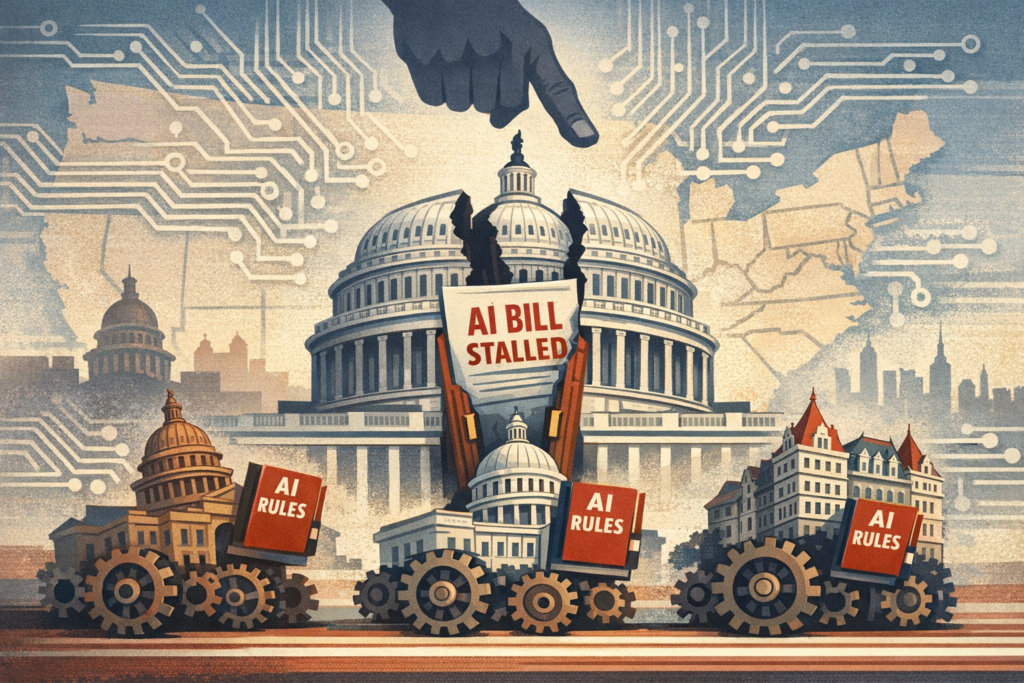

Regulatory spotlight and legal exposure

Privacy laws already on the books — GDPR in Europe, CCPA in California — place obligations on companies to protect employee data, not just consumer data. A leak of employee PII can trigger data breach notification requirements, fines, and class-action litigation. Regulators are also expressing growing interest in model transparency and accountability; incidents where models reveal sensitive data could accelerate proposals for stricter oversight of AI systems.

Beyond fines, the intangible costs are substantial. Hiring and retention can suffer if employees fear their personal details are vulnerable. Institutional trust erodes, and that has downstream effects on collaboration and culture — the very assets that high-performing AI deployments aim to augment.

What the next 12–24 months could look like

Three plausible trajectories emerge:

- Corrective consolidation: Companies double down on governance, vendors ship robust policy controls, and enterprise adoption resumes under stricter guardrails. The market rewards platforms that can prove auditable, and AI becomes more conservative inside the firewall.

- Regulatory-driven retrenchment: New rules impose heavy compliance burdens for AI systems accessing personal data. Smaller vendors struggle with compliance costs, leaving the market to large clouds and specialist providers.

- Innovation in privacy-preserving AI: Demand for solutions such as encrypted search, on-device vector stores, and federated learning pushes a wave of technical innovation that reconciles utility and privacy without sacrificing model performance.

How companies should react now — pragmatic steps that matter

For any organization running, piloting, or even contemplating conversational agents that touch internal data, the immediate playbook should be straightforward and urgent:

- Inventory: Know exactly what internal systems feed your agents. Map data flows end to end.

- Freeze risky indexing: Pause or segment any pipeline ingesting HR, finance, legal, or other sensitive records into production search indices.

- Implement RBAC/ABAC: Ensure that retrieval honors the same access controls users have across the original systems.

- Audit: Enable detailed logging of agent queries and outputs and restrict access to those logs.

- Red-team: Run simulated prompts designed to extract PII and see how the system responds. Treat failures as data, not embarrassment.

Closing thought: the test of mature AI is not fluency but fidelity

We are entering a phase where AI agents are judged less on conversational polish and more on fidelity to privacy and policy constraints. The incident of an agent leaking employee data is a painful but necessary lesson: usefulness without disciplined governance is a brittle foundation. Organizations that internalize that truth — by building systems where policy, provenance, and privacy are first-class citizens — will not only avoid headlines but will unlock the real promise of enterprise AI: smarter, safer, and trustable augmentation of human work.