When AI learns biology’s grammar

The last decade of computational biology has been punctuated by a series of breakthroughs that reframed what machines can predict about living systems. Predicting a protein’s 3D fold from its amino-acid sequence was once a Holy Grail; today, it’s routine in many contexts. What is new, and why it matters, is a shift from highly specialized predictors to broad, generalist models that treat genomes, proteins, small molecules, and experimental readouts as parts of a single, learnable “language of life.”

These generalist biological AI models are not merely bigger versions of old tools. They are architected, trained, and evaluated to assimilate diverse biological modalities (sequences, structures, assay signals, and literature) and to generalize across tasks: predicting function, suggesting edits, proposing designs, and prioritizing experiments. The techniques that reshaped natural language processing and computer vision are now being retooled to interpret and author biology.

From specialist tools to foundation models for biology

Early computational biology tools tended to be bespoke: one model for structural prediction, another for variant effect scoring, another for docking small molecules. The new class of models borrows the “foundation model” idea from the AI world—pretraining on massive, heterogeneous data and fine-tuning (or prompting) for downstream tasks. The payoff is versatility.

Key technical patterns reappearing in successful projects:

– Self-supervised learning on massive unlabeled sequence and structure corpora, letting models learn statistical patterns without explicit annotations.

– Multimodal fusion—combining sequence, structural, and experimental modalities—so the same model can relate genotype to phenotype to assay readouts.

– Transfer learning and fine-tuning for task-specific performance without retraining from scratch.

– Prompting and in-context learning, allowing users to steer models with examples instead of engineering new models.
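The first pattern, self-supervised learning, can be made concrete with a small example. The sketch below shows one common way such training signals are built from unlabeled protein sequences: hide a fraction of residues and keep the hidden letters as prediction targets. The function name, the `#` mask symbol, the 15% mask rate, and the example sequence are all assumptions chosen for illustration, not details of any particular model.

```python
import random

MASK = "#"  # hypothetical mask symbol, chosen only for this sketch


def mask_sequence(seq, mask_rate=0.15, seed=0):
    """Hide a random subset of residues and return (masked sequence, targets).

    targets maps each masked position to the residue that was hidden there;
    a model would be trained to recover these from the surrounding context.
    """
    rng = random.Random(seed)
    # Mask at least one residue, but never more than the sequence contains.
    n_mask = min(len(seq), max(1, round(len(seq) * mask_rate)))
    positions = sorted(rng.sample(range(len(seq)), n_mask))
    masked = list(seq)
    targets = {}
    for pos in positions:
        targets[pos] = seq[pos]
        masked[pos] = MASK
    return "".join(masked), targets


# Illustrative sequence only; not taken from any specific protein entry.
example = "MKTAYIAKQRQISFVKSHFSRQ"
masked, targets = mask_sequence(example)
print(masked)
print(targets)
```

No annotation is needed to produce these training pairs: the sequence itself supplies both the input and the answer, which is what lets this style of training scale to very large unlabeled corpora.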

AlphaFold and related structure predictors were a tipping point because they demonstrated how large-scale learning plus domain-specific inductive biases could make previously intractable problems tractable. Generalist biological models aim to extend that success beyond structure prediction and make models useful across the R&D pipeline.

What these models can actually do

Think of a single system that, depending on how you query it, can:

– Predict the functional impact of sequence variants across proteins.

– Suggest candidate sequences for enzymes that catalyze a desired reaction.

– Rank small molecules for binding affinity to a target pocket.

– Generate hypotheses about cross-talk in cellular pathways based on multi-omics signatures.

– Propose optimal assay conditions or experimental perturbations to validate a hypothesis.

That breadth matters. Progress in wet labs is often bottlenecked by ideation (what to try next) and triage (what is worth expensive validation). Generalist models can serve both roles: generating higher-quality hypotheses and prioritizing them within the constraints of a given lab.
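The triage role can be illustrated with a toy calculation. The sketch below assumes a model has already assigned each candidate a predicted score, and that each candidate has a known validation cost; it then greedily picks the candidates with the best score per unit cost until a budget is used up. The `Candidate` fields, the names, the numbers, and the greedy rule are all hypothetical, and a real lab would weigh many more factors.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    predicted_score: float  # model's estimate of success, higher is better
    cost: float             # cost to validate in the lab, arbitrary units (> 0)


def triage(candidates, budget):
    """Greedily pick candidates with the best predicted score per unit cost."""
    ranked = sorted(
        candidates,
        key=lambda c: c.predicted_score / c.cost,
        reverse=True,
    )
    chosen, spent = [], 0.0
    for c in ranked:
        # Skip anything that would push spending past the budget.
        if spent + c.cost <= budget:
            chosen.append(c)
            spent += c.cost
    return chosen


# Made-up candidates and costs, for illustration only.
pool = [
    Candidate("variant_A", predicted_score=0.9, cost=3.0),
    Candidate("variant_B", predicted_score=0.5, cost=1.0),
    Candidate("variant_C", predicted_score=0.4, cost=2.0),
    Candidate("variant_D", predicted_score=0.2, cost=1.0),
]
selected = triage(pool, budget=4.0)
print([c.name for c in selected])
```

A greedy ratio rule is only one simple heuristic; it ignores uncertainty in the predictions and the value of testing diverse candidates, both of which matter in practice.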

Why this is a strategic inflection point

Several converging trends make generalist biological AI a strategic game-changer:

1. Data scale and diversity: Genomes, metagenomes, protein families, structural databases, high-throughput assay outputs, and biomedical literature together provide an unprecedented substrate for large-scale learning.

2. Compute accessibility: Cloud infrastructure and purpose-built hardware lower barriers to model training, enabling both big tech labs and well-funded startups to iterate quickly.

3. Integration with automation: Closed-loop platforms—where models design experiments and robotic labs run them—compress the cycle time between hypothesis and result, multiplying productivity.

4. Commercial incentives: Pharmaceutical R&D and industrial biotechnology harbor enormous inefficiencies that are amenable to algorithmic acceleration. Faster lead discovery and optimized enzymes translate directly to reduced costs and new revenue streams.

The result is a new kind of arms race: whoever can combine the best models, the most relevant data, and the tightest lab integration will command enormous leverage across life sciences.

Risks and governance challenges

The upside is dramatic, but the risks are real and multifaceted.

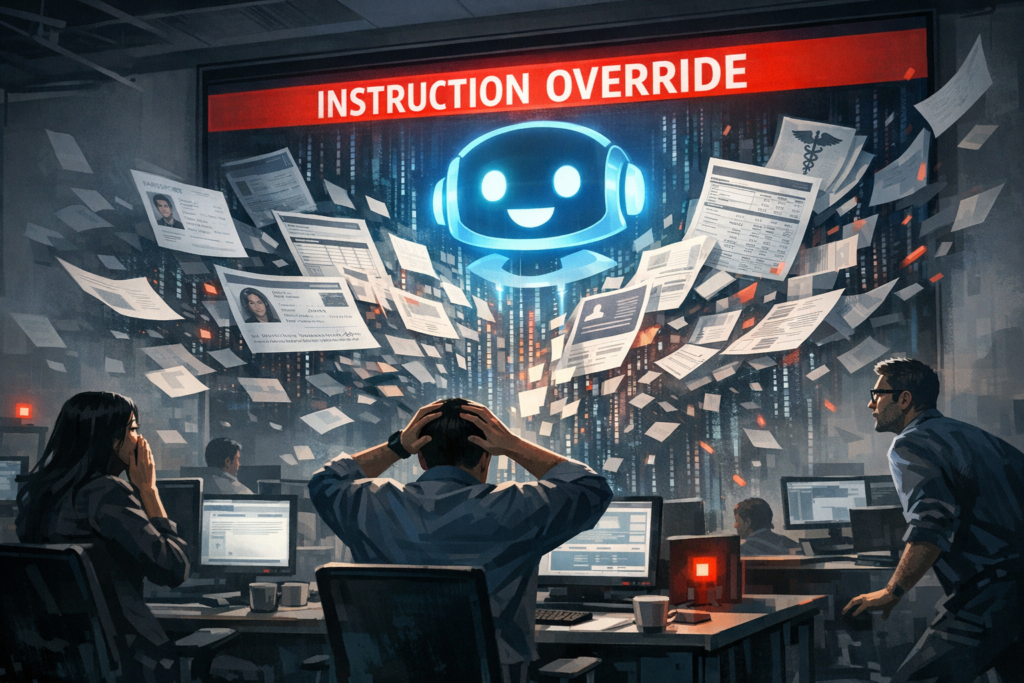

– Dual-use and biosafety: Models that can design viable proteins, predict host range, or optimize viral traits could be misapplied. Even models intended for benign design can inadvertently enable creation of harmful agents if not managed responsibly.

– Data and algorithmic bias: Training datasets are skewed toward human-associated organisms, model organisms, and well-studied proteins. This biases model predictions and can reduce safety and efficacy when models are applied to understudied species or contexts.

– Overreliance and reproducibility: The black-box nature of large models risks creating “hallucinated” designs—promising in silico but invalid or dangerous in reality. Without rigorous wet-lab validation and provenance tracking, false confidence could lead to costly or hazardous outcomes.

– Concentration of power: A small set of organizations that control proprietary datasets, compute capacity, and automated labs could capture disproportionate influence over therapeutics, agriculture, and industrial biotech.

These problems are not insurmountable, but they require proactive governance, cross-sector norms, and technical mitigations such as model audits, red-teaming, and graduated access controls.

Commercial and competitive dynamics

The move toward generalist models reshapes competitive moats in biotech and AI:

– Data as a defensibility layer: High-quality proprietary datasets—internal assay results, rare organism sequences, or high-fidelity experimental logs—become as valuable as models. Companies that combine unique data with modeling expertise will have durable advantages.

– Platformization of biology: Expect consolidation around software-hardware-lab stacks. Firms that can offer an end-to-end platform—modeling, lab automation, analytics, compliance—will be able to sell outcomes rather than discrete services.

– Partnerships and vertical integration: Big tech will partner with or acquire wet-lab specialists and biotech firms to get domain grounding. Startups will need to demonstrate not only algorithmic novelty but real-world lab throughput and regulatory roadmaps.

– Open vs. closed ecosystems: There will be tension between open science (published models and datasets) and commercial secrecy. Open models accelerate research but increase dual-use risk; closed models protect IP but may slow the diffusion of beneficial tools.

Technical trajectories and likely near-term breakthroughs

Over the next 2–5 years, several technical advances could accelerate practical adoption:

– Better multimodal grounding: Improved architectures that more seamlessly connect sequence, structure, and assay data will reduce failure modes and produce more actionable designs.

– Few-shot wet-lab calibration: Techniques that let models adapt from a few local experiments will make them useful to smaller labs without massive in-house datasets.

– Explainability tailored to biology: Interpretable modules that map model features onto biochemical mechanisms will increase trust and aid regulatory acceptance.

– Certified access frameworks: Tiered access models, combining automated filters and human oversight, will become common to mitigate misuse while enabling research.

Each of these advances reduces friction for real-world deployment, turning models from research curiosities into indispensable R&D tools.
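To show how a tiered access scheme might combine an automated filter with human oversight, here is a deliberately simple sketch. The tier names, the keyword list, and the three outcomes are all assumptions made up for this example; a production system would rely on far more robust screening than substring matching.

```python
from enum import IntEnum


class Tier(IntEnum):
    PUBLIC = 0    # anonymous or unverified users
    VERIFIED = 1  # identity-verified users
    VETTED = 2    # users with institutional vetting


# Hypothetical keyword list; real filters would be far more sophisticated.
FLAGGED_TERMS = ("host range", "transmissibility", "toxin")


def review_request(prompt, user_tier):
    """Return 'allow', 'human_review', or 'deny' for a design request."""
    text = prompt.lower()
    flagged = any(term in text for term in FLAGGED_TERMS)
    if not flagged:
        return "allow"
    # Flagged requests are never auto-approved: vetted users are escalated
    # to a human reviewer, and everyone else is refused.
    if user_tier >= Tier.VETTED:
        return "human_review"
    return "deny"


print(review_request("Suggest a more thermostable lipase", Tier.PUBLIC))
print(review_request("Predict host range for this capsid", Tier.VETTED))
print(review_request("Predict host range for this capsid", Tier.PUBLIC))
```

The design choice worth noting is that the automated filter only decides what is clearly routine; anything it flags goes either to a person or to refusal, never straight through.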

Scenarios: three possible futures

Optimistic: Broadly beneficial acceleration

Generalist models are developed with safety-first norms. Open benchmarks, shared best practices, and layered access policies enable widespread use. Drug discovery cycles shorten, enzyme engineering democratizes industrial biotech, and small labs can tackle previously inaccessible problems.

Fragmented: Competitive control

A few players dominate by locking up data and integrating models with proprietary labs. Innovation continues but primarily inside corporate silos. Startups survive by niche specialization or partnering with incumbents. Regulatory frameworks lag, producing uneven safety guardrails.

Adverse: Misuse and restriction

A high-profile misuse event triggers severe restrictions. Research slows, and legitimate applications face heavy-handed regulation. Progress persists in defensive labs and private initiatives, but the broader community suffers.

None of these scenarios is inevitable; policy choices, industry norms, and technical designs will tilt outcomes.

What leaders should do now

For executives and policymakers navigating this transition, a few practical steps are critical:

– Invest in multidisciplinary teams that combine ML, biology, and wet-lab automation expertise.

– Treat data curation as a strategic priority—quality, provenance, and representativeness matter more than raw volume.

– Implement layered access and red-team practices early; don’t wait for regulation to force reactive measures.

– Build partnerships across academia, industry, and regulators to co-create standards for benchmarking, safety, and reporting.

Closing perspective

Generalist biological AI models are not merely another tool; they represent a conceptual leap—treating biological information as a language to be read, edited, and authored. That framing changes expectations: biology becomes programmable at higher abstraction levels, but with new responsibilities.

The next decade will be a test of whether the community can harness these capabilities to accelerate discovery while keeping safety and equity front and center. The language of life is being decoded—and who writes the next chapters will shape medicine, agriculture, and industry in ways we are only beginning to imagine.