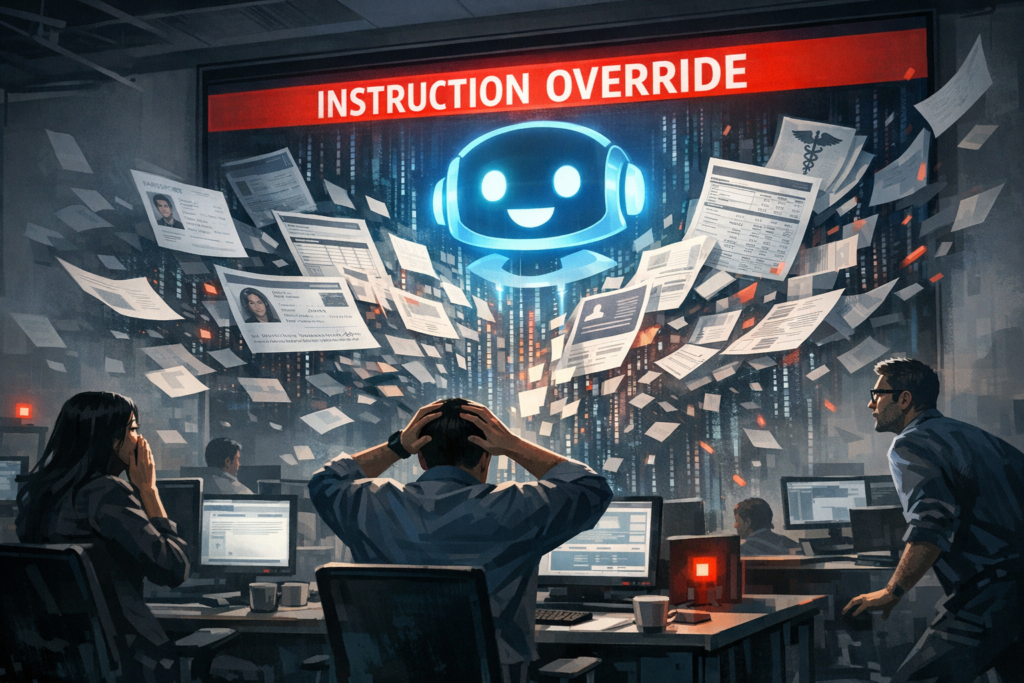

The phrase “work smarter, not harder” has become a rallying cry for businesses adopting generative AI and automation. But a growing body of evidence indicates a counterintuitive outcome: rather than relieving cognitive load, some AI-driven workflows are producing what researchers call “AI brain fry”—a distinct form of productivity-driven burnout. Understanding this dynamic is essential for leaders, technologists, and HR teams who must balance efficiency gains with sustainable performance and employee wellbeing.

What the study found — in plain terms

Recent research identified a pattern: employees who use AI tools intensively report heightened cognitive fatigue, reduced creativity, and a faster onset of burnout than peers who rely on traditional workflows. Two mechanisms drive this:

- Work intensification: AI accelerates the pace and volume of output expected from knowledge workers, raising baseline productivity targets.

- Cognitive fragmentation: Frequent switching between AI prompts, edits, and verification tasks fragments attention, increasing mental effort and decision fatigue.

Put simply, AI raises what people can deliver, and managers — intentionally or not — raise what people are expected to deliver. The result is chronic overload rather than relief.

Why this matters for the AI industry

The findings have implications across the AI lifecycle — from product design to deployment and governance.

Design and user experience

- AI vendors must move beyond raw capability metrics and measure cognitive ergonomics — how a tool affects attention, trust, and mental effort.

- Interfaces that encourage continuous micro‑interactions (endless prompt‑tweak cycles) amplify cognitive load. The industry needs interaction patterns that foster deep work and minimize costly context switches.

Adoption and retention

- Companies that roll out AI without process redesign risk short‑term productivity spikes followed by long‑term declines and talent attrition.

- AI adoption metrics should include wellbeing signals to avoid perverse incentives where measured “productivity” improves while human capacity degrades.
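
One way to make the perverse-incentive check concrete is to compare how throughput and a wellbeing signal moved over the same period. The sketch below is illustrative only: the function names, the (before, after) input shape, and the 5% threshold are assumptions, not part of the research described above.

```python
def relative_change(before: float, after: float) -> float:
    """Fractional change from `before` to `after` (assumes before > 0)."""
    return (after - before) / before


def diverging(throughput, wellbeing, threshold=0.05):
    """True when throughput rose and wellbeing fell, each by more than `threshold`.

    Each argument is a hypothetical (before, after) pair for the same team
    and period, e.g. tickets closed per week and a 1-5 survey score.
    """
    return (relative_change(*throughput) > threshold
            and relative_change(*wellbeing) < -threshold)


# Output up 30%, survey score down 15%: the pattern the bullet warns about.
print(diverging((100, 130), (4.0, 3.4)))  # True
# Output up 30%, survey score roughly flat: no flag.
print(diverging((100, 130), (4.0, 4.1)))  # False
```

A flag like this is only a prompt for a conversation; which wellbeing signal to use and how large a shift matters would need to be decided per team.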

Who benefits and who’s threatened

Beneficiaries

- AI platform vendors: demand grows as organizations chase efficiency gains.

- High performers: employees who can sustainably scale output with AI may create more value and accelerate careers.

- Organizations with strong change management: teams that redesign processes around AI and invest in training and rest cycles will capture productivity without burnout.

At risk

- Knowledge workers lacking autonomy: employees in high‑pressure settings with top‑down targets are most vulnerable to brain fry.

- Small companies without HR bandwidth: these firms may adopt AI aggressively but fail to monitor its effects on staff.

- Ethics and compliance teams: rushed output increases error rates and legal exposure (e.g., flawed contracts or unsafe code) that these teams must then manage.

Market implications and business impact

The economic calculus for AI is no longer just compute cost vs. time saved. Businesses must account for soft costs that have hard-dollar consequences.

- Hidden costs of speed: faster output can mean more post‑hoc corrections, reputational harm, and higher error remediation expenses.

- Talent churn: burnout drives turnover; replacing skilled knowledge workers is expensive and slows institutional learning.

- Regulatory scrutiny: as misuse and harm increase, regulators may require firms to demonstrate AI governance, transparency, and worker protections, which raises compliance costs.

Consequently, the ROI model for AI procurement must include metrics for accuracy, employee wellbeing, error incidence, and retention — not just throughput.
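
To illustrate how soft costs can change the arithmetic, here is a minimal sketch that compares a throughput-only ROI with one that also counts rework and burnout-related turnover. Every field name and figure is a hypothetical assumption chosen for the example, not data from any study.

```python
from dataclasses import dataclass


@dataclass
class AiRoiInputs:
    """Hypothetical annual figures for one team; every field is an assumption."""
    hours_saved: float        # staff hours the tool saves per year
    hourly_cost: float        # loaded cost of one staff hour
    tool_cost: float          # licences plus compute per year
    rework_hours: float       # hours spent correcting AI-assisted output
    extra_departures: float   # departures per year attributed to burnout
    replacement_cost: float   # cost to replace one departing employee


def throughput_only_roi(x: AiRoiInputs) -> float:
    """Naive view: net value of time saved relative to tool cost."""
    return (x.hours_saved * x.hourly_cost - x.tool_cost) / x.tool_cost


def adjusted_roi(x: AiRoiInputs) -> float:
    """Same gross benefit, but rework and turnover are added to the cost side."""
    gross = x.hours_saved * x.hourly_cost
    hidden = x.rework_hours * x.hourly_cost + x.extra_departures * x.replacement_cost
    total_cost = x.tool_cost + hidden
    return (gross - total_cost) / total_cost


example = AiRoiInputs(hours_saved=2000, hourly_cost=50, tool_cost=20000,
                      rework_hours=400, extra_departures=1, replacement_cost=40000)
print(throughput_only_roi(example))  # 4.0
print(adjusted_roi(example))         # 0.25
```

With these made-up inputs, a 400% return on paper shrinks to 25% once rework and one extra departure are counted. Real figures would need to come from an organization's own data.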

Real-world use cases: where brain fry shows up and how to mitigate it

Marketing and content teams

Use case: Rapid generation of social posts, ad copy, and A/B variants invites continuous iteration.

- Risk: Creative exhaustion from endless micro‑tweaks and KPI chasing.

- Mitigation: Batch content cycles, set limits on AI‑driven iterations, and prioritize quality metrics over quantity.

Software engineering

Use case: Developers rely on AI assistants for code suggestions and refactors.

- Risk: Overreliance shifts cognitive load onto code review and debugging, and subtle bugs propagate.

- Mitigation: Enforce review gates, pair AI suggestions with runbooks, and limit continuous usage during deep‑work periods.

Legal and compliance drafting

Use case: Lawyers use AI to draft contracts and analyze documents.

- Risk: Complacency and missed nuance; elevated risk exposure from unchecked drafts.

- Mitigation: Use AI as a first-draft tool, with mandatory human verification and explicit confidence indicators from models.

Customer support

Use case: AI triages tickets and proposes responses.

- Risk: Agents manage AI suggestions while handling calls, creating concurrent cognitive demands.

- Mitigation: Route complex cases to human-only queues and allow agents time between interactions for reflection and recovery.

Future predictions and expert recommendations

The next 24 months will determine whether AI becomes a sustainable productivity multiplier or a driver of systemic burnout.

- Rise of cognitive‑aware AI tooling: Expect features that measure and surface user cognitive load and recommend pacing, similar to health wearables but for attention.

- New procurement KPIs: Organizations will include employee wellbeing and error rates in vendor evaluation criteria.

- Policy and governance frameworks: Labor regulations will begin to consider AI‑driven work intensification; companies will need formal AI use policies.

- Human‑AI role redesign: Job descriptions will emphasize judgment and oversight over rote output; training programs will focus on AI literacy and cognitive resilience.

From a strategic perspective, firms that deliberately redesign workflows to align AI capabilities with human cognitive limits will capture disproportionate long‑term value.

Practical playbook for leaders

- Measure beyond speed: add quality, wellbeing, and rework metrics to dashboards.

- Set guardrails: limit continuous AI sessions, enforce review steps, and calibrate output expectations to sustainable levels.

- Train managers: teach leaders to interpret AI metrics and set humane productivity targets.

- Design for deep work: configure tools to reduce interruptions and support focused intervals.

- Prioritize explainability: surface model confidence and provenance so humans can validate AI outputs efficiently.

FAQ

Q: What is “AI brain fry” exactly?

A: It’s a practical term describing cognitive fatigue and burnout patterns linked to intensive, productivity‑driven use of AI — marked by attention fragmentation, decision fatigue, and a sense of being overwhelmed by rapid output expectations.

Q: Does AI cause burnout or just reveal existing problems?

A: Both. AI can expose preexisting process flaws (e.g., unrealistic targets) while also creating new stressors (constant iteration, validation burden). The combination amplifies risk.

Q: Should organizations stop using AI to avoid this problem?

A: No. AI delivers significant benefits, but it must be deployed with process redesign, human oversight, and wellbeing safeguards. Abandoning AI wastes its potential; mismanaging it causes harm.

Q: How can companies measure cognitive load related to AI?

A: Use mixed methods: self‑reported fatigue surveys, interaction telemetry (session lengths, prompt cycles), error/rework rates, and qualitative interviews to triangulate impact.
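
As a rough sketch of the telemetry part of that answer, the snippet below groups prompt timestamps into sessions and reports session length and prompt count. The function names, the input format (a list of prompt timestamps), and the 10-minute gap that ends a session are all assumptions made for illustration.

```python
from datetime import datetime, timedelta


def split_sessions(prompt_times, max_gap=timedelta(minutes=10)):
    """Group prompt timestamps into sessions; a gap above max_gap starts a new one."""
    sessions = []
    for t in sorted(prompt_times):
        if sessions and t - sessions[-1][-1] <= max_gap:
            sessions[-1].append(t)
        else:
            sessions.append([t])
    return sessions


def session_summary(prompt_times, max_gap=timedelta(minutes=10)):
    """Return prompt count and duration in minutes for each session."""
    return [
        {"prompts": len(s), "minutes": (s[-1] - s[0]).total_seconds() / 60}
        for s in split_sessions(prompt_times, max_gap)
    ]


# Hypothetical prompts from one user on one morning.
day = datetime(2024, 1, 8)
times = [day.replace(hour=9, minute=m) for m in (0, 5, 12)]
times += [day.replace(hour=10, minute=m) for m in (0, 3)]
print(session_summary(times))
# [{'prompts': 3, 'minutes': 12.0}, {'prompts': 2, 'minutes': 3.0}]
```

Numbers like these say nothing about fatigue on their own; they become useful when read alongside the survey, rework, and interview data mentioned in the answer.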

Q: What short-term steps can managers take this week?

A: Adjust output targets to sustainable levels, mandate review steps for AI outputs, schedule uninterrupted “deep work” blocks, and solicit anonymous feedback on the impact of AI tools.

Conclusion

AI promises unprecedented productivity gains, but unchecked deployment risks turning a performance tool into a pathway for burnout. The key is intentionality: design AI interactions that respect human attention, measure outcomes comprehensively, and align incentives so speed does not trump sustainability. Organizations that embed cognitive safety into their AI strategy will not only protect employees — they will capture the durable competitive advantage that comes from high‑quality, resilient human‑AI collaboration.