When the Machine Writes Too Much: How AI Is Flooding Codebases and Fraying Developer Focus

The promise of generative AI in software engineering was simple and seductive: let the model draft routine functions, scaffold APIs, and auto-complete boilerplate so human engineers can work on higher-value design, architecture, and product thinking. The reality arriving in many engineering organizations looks messier. Instead of a gentle productivity bump, teams report a torrent of AI-generated code, a jumble of overlapping suggestions, and a rising tide of subtle bugs that are harder to trace. What began as augmentation risks becoming an overload—where the volume and variability of machine-produced code erode rather than enhance software quality.

This is not a minor workflow hiccup. The balance between automation and human oversight defines whether AI serves as a force multiplier for developers or as a multiplier for technical debt. Understanding why this shift is happening—and how organizations can respond—matters for product reliability, developer morale, and the economics of software engineering.

Not all code is created equal

AI code assistants excel at routine patterns: CRUD endpoints, client stubs, unit-test skeletons, and common algorithmic snippets. But quality varies. Models trained on vast public repositories can produce syntactically correct, compilable code that still harbors logical errors, insecure defaults, licensing risks, or inefficient architectures. When dozens of such outputs enter a codebase, the cumulative effect can be unpredictable.

Two interacting dynamics amplify the problem:

– Quantity bias: AI systems are optimized to generate many plausible alternatives quickly. Developers confronted with multiple suggestions may accept the first reasonable-looking snippet without deep validation, increasing the chance of hidden defects.

– Context fragility: Large language models lack a full, up-to-date understanding of a project’s architecture, dependencies, and runtime contracts. They cannot reliably infer non-explicit business rules, deployment constraints, or cross-module invariants.

Together, these dynamics create an ecosystem where more code does not equal better code.

How AI shifts developer workflows—and stress

The early narrative around AI-assisted development framed models as accelerators that freed engineers from repetitive tasks. But acceleration without better guardrails accelerates errors as well. Developers now find themselves toggling between many roles at once: prompt engineers crafting precise instructions, reviewers digging into machine-suggested merges, and incident responders tracing bugs introduced by generated code.

The cognitive load increases in at least three ways:

1. Decision fatigue: Choosing among multiple AI suggestions and deciding when to override defaults consumes time and mental bandwidth.

2. Review expansion: Code reviews spend more cycles validating generated code paths, and often require deeper integration testing rather than simple lint checks.

3. Ownership ambiguity: When a model writes a function that later fails in production, teams grapple with responsibility—was this developer negligence, model hallucination, or a failure in tooling?

These stresses have knock-on effects: slower release cadences, burnout risk among senior engineers who now shoulder more quality assurance, and a potential devaluation of junior roles if AI handles initial drafts but leaves complex integration work to experienced staff.

Risk vectors: bugs, security, and licensing

The proliferation of machine-written code opens multiple risk channels:

– Subtle bugs: Generated code can contain off-by-one errors, incorrect boundary conditions, or assumptions about input shapes that only manifest under specific load or data conditions.

– Security flaws: Defaults that skip input sanitization, rely on outdated cryptographic primitives, or expose sensitive logic can introduce vulnerabilities at scale.

– Licensing exposure: Models trained on public repositories may reproduce licensed snippets in ways that create compliance issues for downstream users.

– Maintainability debt: If generated code uses obscure patterns or inconsistent styles, it increases the future cost of modification and debugging.

Each issue matters not only technically but legally and financially, especially for businesses operating at scale or in regulated sectors.
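To make the first risk concrete, here is a minimal, hypothetical sketch of a boundary bug of the kind described above. The function names `chunk_buggy` and `chunk` are illustrative and not drawn from any real codebase. The buggy version looks reasonable and agrees with the correct one on evenly divisible input, which is exactly why a quick review can miss it.

```python
def chunk_buggy(items, size):
    """Split items into batches of `size`.

    Plausible-looking but wrong: the range stops early, so a trailing
    partial batch is silently dropped.
    """
    return [items[i:i + size] for i in range(0, len(items) - size + 1, size)]


def chunk(items, size):
    """Split items into batches of `size`, keeping the final partial batch."""
    return [items[i:i + size] for i in range(0, len(items), size)]


if __name__ == "__main__":
    even = [1, 2, 3, 4]
    odd = [1, 2, 3, 4, 5]
    print(chunk_buggy(even, 2) == chunk(even, 2))  # True: the bug is invisible here
    print(chunk_buggy(odd, 2))  # [[1, 2], [3, 4]]  (the 5 is lost)
    print(chunk(odd, 2))        # [[1, 2], [3, 4], [5]]
```

A test suite that only exercises the evenly divisible case would pass both versions, so the defect surfaces only when real data has an awkward length.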

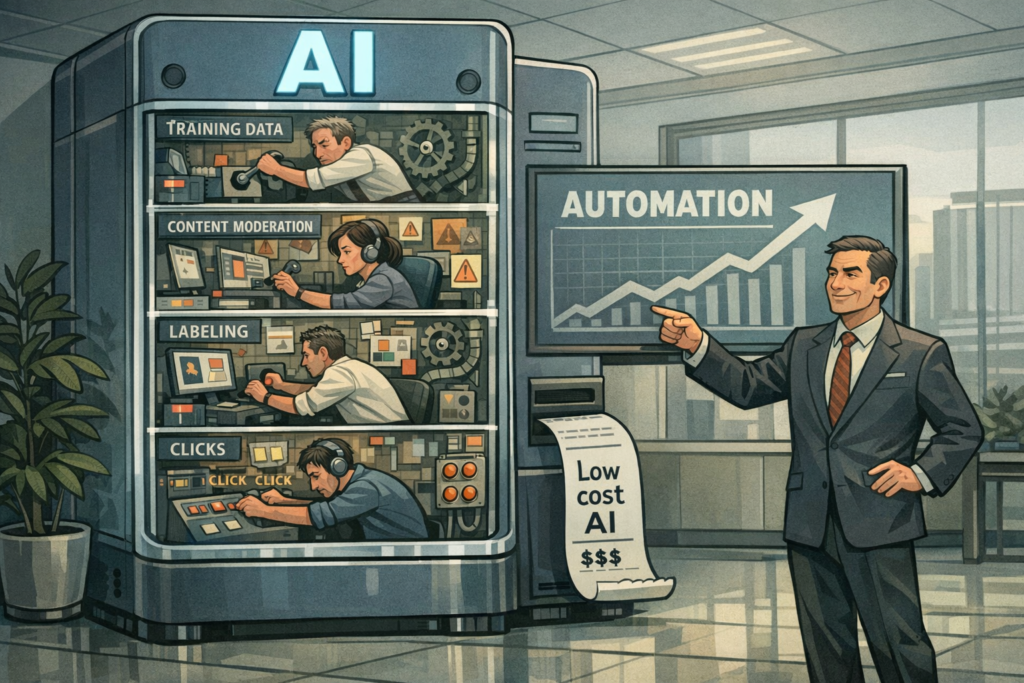

Strategic context: a fast-evolving competitive landscape

Major cloud vendors and developer-tool companies are racing to integrate richer AI capabilities into IDEs, CI/CD, and infrastructure-as-code tooling. This competition is rapidly commoditizing certain developer tasks while differentiating on integrations, model quality, and enterprise governance features.

Startups and incumbents are taking diverging approaches:

– Platform players prioritize seamless integration with existing pipelines and enterprise controls (audit trails, approvals, policy enforcement).

– Niche startups aim to specialize—offering domain-tuned models for security, fintech, or healthcare codebases, or building sophisticated test-generation tools that complement code suggestions.

– Open-source projects push for model transparency and reproducibility, raising expectations around provenance and auditability of generated outputs.

How organizations select among these options will influence whether AI’s impact amplifies productivity or complexity.

Tools, processes, and the new engineering stack

To navigate the overload, teams are building a new engineering stack centered on governance and observability rather than raw generation. Effective components include:

– Policy-driven generators: Limit models to approved libraries, frameworks, and coding conventions, reducing variance.

– Automated vetting pipelines: Integrate static analysis, security scanners, and property-based tests into the suggestion-evaluation loop before code reaches review.

– Explainability layers: Provide provenance metadata (training data signals, prompt history, confidence estimates) so reviewers can judge when to trust a suggestion.

– Role redesign: Elevate human reviewers into curators who validate behavior and system-level constraints rather than line-by-line syntax checks.

These additions add friction that undercuts the “instant suggestion” pitch, but they increase overall system safety, turning AI from a draft machine into a validated collaborator.
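As a rough illustration of how a policy check and a provenance record might fit together, the sketch below vets a generated Python snippet against an import allowlist and attaches the result to a small metadata record. Everything here is an assumption made for illustration: the allowlist contents, the banned-call set, and the names `vet_snippet`, `SuggestionRecord`, and `evaluate` are hypothetical. A real pipeline would likely add dedicated static analyzers and security scanners on top of a check like this.

```python
import ast
import hashlib
from dataclasses import dataclass, field
from typing import List

# Hypothetical team policy; a real allowlist would come from configuration.
ALLOWED_MODULES = {"json", "math", "datetime"}
BANNED_CALLS = {"eval", "exec"}


def vet_snippet(source: str) -> List[str]:
    """Return policy findings for a generated snippet (empty list means clean)."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        return [f"syntax error: {exc.msg}"]

    findings = []
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            modules = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            modules = [node.module]
        else:
            modules = []
        for module in modules:
            top_level = module.split(".")[0]
            if top_level not in ALLOWED_MODULES:
                findings.append(f"disallowed import: {top_level}")
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in BANNED_CALLS
        ):
            findings.append(f"banned call: {node.func.id}")
    return sorted(findings)


@dataclass
class SuggestionRecord:
    """Provenance attached to one suggestion so reviewers can see its origin."""
    model_name: str
    prompt: str
    source: str
    findings: List[str] = field(default_factory=list)

    @property
    def prompt_digest(self) -> str:
        # Store a short digest rather than the raw prompt, in case prompts are sensitive.
        return hashlib.sha256(self.prompt.encode("utf-8")).hexdigest()[:12]

    @property
    def ready_for_review(self) -> bool:
        # Only suggestions with no policy findings reach a human reviewer.
        return not self.findings


def evaluate(model_name: str, prompt: str, source: str) -> SuggestionRecord:
    """Vet a suggestion and bundle the result with its provenance."""
    return SuggestionRecord(model_name, prompt, source, vet_snippet(source))
```

The design choice worth noting is that the check runs before review: a suggestion that imports an unapproved module never consumes reviewer attention, and the record keeps enough context to audit the decision later.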

Regulatory and business consequences

As AI code generation becomes a systemic part of software production, regulators and customers will demand stronger assurances. Potential developments to watch:

– Liability frameworks: Courts and regulators may need to clarify responsibility for defects caused by AI-generated code, influencing indemnity and insurance for software vendors.

– Certification standards: Industries like healthcare and transportation could require certified model pipelines and traceability before AI-produced code is allowed in production systems.

– Procurement shifts: Enterprises may prefer vendors that offer traceable, auditable AI pipelines and robust guardrails over those with raw generation capabilities.

These shifts will favor vendors that can demonstrate governance and accountability, not just clever suggestion engines.

Three plausible futures

1. Maturation and containment: Tooling advances—better context-aware models, integrated vetting, and enterprise controls—mitigate overload. AI becomes a reliable assistant that reduces routine defects and frees engineers for complex work.

2. Fragmentation and specialization: Domain-specific models and regulatory pressures split the market. High-assurance sectors adopt restrictive, certified tools; commodity apps use more permissive systems, producing uneven quality across industries.

3. Systemic complexity and regulatory backlash: Widespread reliability incidents or security breaches traced to AI-generated code prompt stricter regulations, slowing adoption and forcing a re-evaluation of human-in-the-loop requirements.

Each path requires different strategic responses from engineering leaders and vendors.

Practical playbook for engineering leaders

For teams wrestling with AI-induced code overload, pragmatic steps reduce risk while preserving value:

– Treat AI outputs as engineering assets, not magic. Require provenance, attach confidence scores, and enforce pre-merge verification.

– Expand automated testing to include scenario-driven and property-based tests that target the failure modes typical of generated code, such as mishandled boundary conditions and wrong assumptions about inputs.

– Build clear ownership models: define who validates suggestions, who merges, and who is accountable for production incidents.

– Invest in developer training that covers prompt engineering, AI failure modes, and how to audit generated code.

– Measure shift-left metrics: time spent in review, defect density attributable to generated code, and the false-positive/false-negative rates of automated vetting.
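The metrics in the last item reduce to simple ratios once the underlying counts are available. The sketch below assumes a team already tags which defects and which lines originate from generated code, and that vetting outcomes have been labeled after the fact. The class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class VettingOutcomes:
    """Confusion-matrix counts for an automated vetting step."""
    true_positives: int   # defective snippets the pipeline flagged
    false_positives: int  # clean snippets the pipeline flagged
    true_negatives: int   # clean snippets the pipeline passed
    false_negatives: int  # defective snippets the pipeline passed

    def false_positive_rate(self) -> float:
        """Share of clean snippets that were wrongly flagged."""
        clean = self.false_positives + self.true_negatives
        return self.false_positives / clean if clean else 0.0

    def false_negative_rate(self) -> float:
        """Share of defective snippets that slipped through."""
        defective = self.false_negatives + self.true_positives
        return self.false_negatives / defective if defective else 0.0


def defect_density(defects: int, lines_of_code: int) -> float:
    """Defects per thousand lines of code (KLOC)."""
    if lines_of_code <= 0:
        raise ValueError("lines_of_code must be positive")
    return defects / (lines_of_code / 1000)
```

For example, with made-up counts of 8 true positives, 5 false positives, 95 true negatives, and 2 false negatives, the false-positive rate is 5 / 100 and the false-negative rate is 2 / 10. Comparing `defect_density` for generated and hand-written code over the same period gives a first, rough signal of whether generated code is carrying more than its share of defects.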

These measures transform ad hoc adoption into institutional capability.
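The property-based testing recommended in the playbook can be sketched with nothing but the standard library. Instead of checking a few hand-picked examples, the test states invariants and tries many random inputs against them. The helper `dedupe_generated` is a hypothetical stand-in for a plausible but flawed suggestion. In practice a dedicated library such as Hypothesis would handle input generation and shrinking, so treat this as a minimal sketch of the idea.

```python
import random


def dedupe_generated(items):
    """Stand-in for a generated helper: removes duplicates but loses order."""
    return sorted(set(items))


def dedupe(items):
    """Remove duplicates, keeping the first occurrence of each item in order."""
    seen = set()
    result = []
    for item in items:
        if item not in seen:
            seen.add(item)
            result.append(item)
    return result


def satisfies_properties(func, items):
    """Check three invariants that any correct order-preserving dedupe must hold."""
    output = func(items)
    no_duplicates = len(output) == len(set(output))
    same_elements = set(output) == set(items)
    if not (no_duplicates and same_elements):
        return False
    # Each kept item must appear in the order it was first seen in the input.
    first_seen = [items.index(x) for x in output]
    return first_seen == sorted(first_seen)


def find_counterexample(func, trials=500, seed=0):
    """Try random inputs; return the first one violating the properties, or None."""
    rng = random.Random(seed)  # fixed seed keeps any failure reproducible
    for _ in range(trials):
        items = [rng.randint(0, 9) for _ in range(rng.randint(0, 8))]
        if not satisfies_properties(func, items):
            return items
    return None
```

Calling `find_counterexample(dedupe_generated)` returns a failing input, while `find_counterexample(dedupe)` returns `None`. An example-based test that happened to use already sorted input would have passed both versions.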

Closing thought: steering AI’s double edge

Generative AI will continue to reshape how software is written. The question for organizations is not whether to use it, but how to steer it. Left unmanaged, the flood of machine-produced code will amplify bugs and erode developer focus. Handled with deliberate governance, tooling, and human oversight, AI can shrink mundane toil and let engineers focus on architecture, product differentiation, and system resilience.

The need is urgent. Build the scaffolding now (test harnesses, governance policies, and cultural norms that require accountability) so that AI’s promise becomes a sustained productivity gain rather than a temporary acceleration toward greater complexity. The future of software will be co-authored by humans and machines; making that co-authorship constructive is the core leadership challenge of the moment.