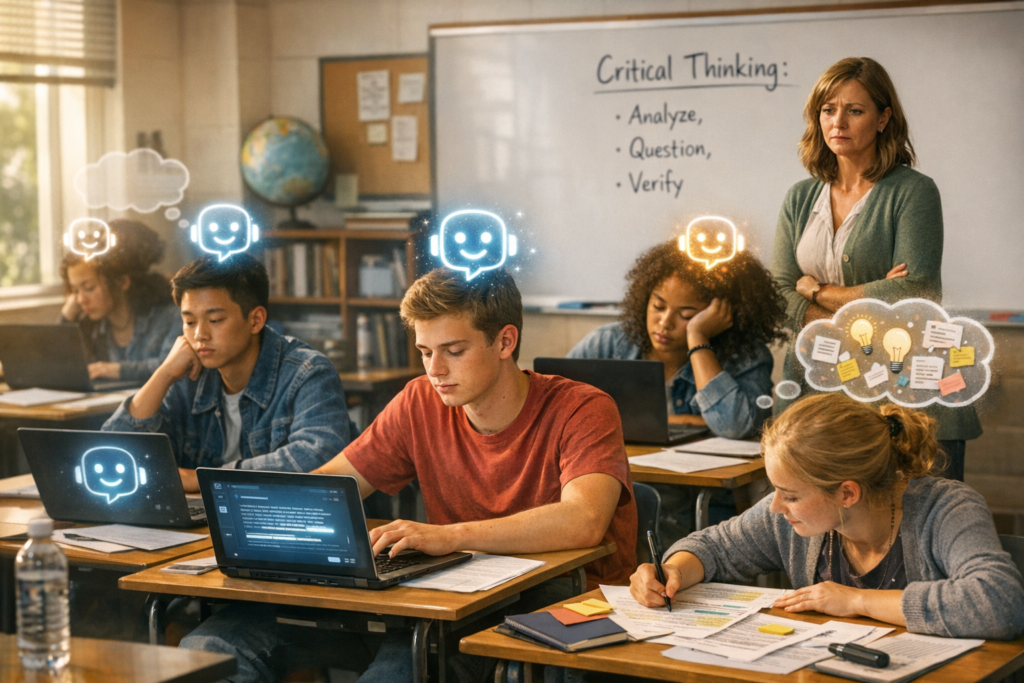

Classrooms are changing faster than the policies meant to govern them. Generative AI tools like ChatGPT and Bard, integrated into student workflows and learning management systems, promise efficiency, personalization, and fresh pedagogical possibilities. Yet their rise is exposing a less-discussed but consequential problem: an erosion of students’ capacity for independent critical thinking. This trend is not inevitable; it is the product of design decisions, incentive structures, and educational practices that have failed to adapt to a fundamentally new kind of assistant.

When convenience becomes cognitive shortcut

At first glance, AI in education feels like a boon. It drafts essays, clarifies concepts, generates practice problems, and offers rapid feedback — tasks that can relieve teachers of routine labor. But these same capabilities can also encourage cognitive offloading: students outsource reasoning to models whose outputs can be persuasive without being sound. The result is a pattern where answers are accepted because they are readily available and well‑phrased, not because they have been scrutinized.

This behavior is amplified by two intersecting factors. First, generative models are optimized to produce fluent, coherent text. Fluency often masquerades as correctness. Second, classroom incentives remain skewed toward product over process — grades reward finished deliverables more than the messy work of argument development, hypothesis testing, or source evaluation. Together, they create an environment where speed and polish trump intellectual rigor.

The invisible curriculum: what students actually learn

There’s an invisible curriculum operating beneath lesson plans: students learn what behaviors produce results. If using an AI assistant reliably produces acceptable assignments with less effort, it becomes the rational strategy. Over time, that changes the skills being practiced. Instead of honing the iterative movements of problem finding, framing, and critique, learners perfect prompt engineering and surface-checking — useful skills, but not substitutes for deep analytical reasoning.

Industry forces shaping classroom outcomes

The tech ecosystem is converging on education as a high-impact market. Large AI firms are integrating conversational models into productivity suites and education platforms. Edtech startups are building AI tutors, answer-checkers, and automated graders. The competitive pressure to deliver immediate utility drives product features that prioritize answer generation and rapid feedback loops.

From a business perspective, that makes sense: products that deliver clear, tangible benefits are easier to sell to school districts facing tight budgets and politically charged timelines. But market incentives do not inherently prioritize pedagogical integrity. If AI tools raise engagement metrics or cut teacher workload, they will proliferate, even if the long-term learning they produce is shallow.

Data, privacy, and the feedback loop

Another industry dynamic is the data feedback loop. Models improve with usage data, and student interactions can become training fodder for future iterations. That yields better performance but also entrenches existing patterns of use. If students primarily use AI for drafting essays or solving homework, models will get better at those tasks while remaining poorly tuned for fostering critical thinking. Privacy and consent concerns complicate this picture: districts must balance the pedagogical promise of adaptive learning analytics with legal and ethical obligations around student data.

Risks beyond the classroom

The educational consequences have social and economic ripple effects. Employers and higher‑education institutions rely on schooling to certify competencies: reasoning, problem solving, and independent judgment. If these skills erode, credential inflation intensifies: degrees signal less about ability and more about completion in a world where much of the underlying work can be automated. That raises questions about long-term workforce readiness and the fairness of assessments that don’t capture true capability.

There are also equity concerns. Students with access to high‑quality instruction about how to use AI thoughtfully will benefit disproportionately. Conversely, those in underfunded schools may receive AI tools without the accompanying curricular redesign or teacher training, deepening existing achievement gaps.

Designing AI that cultivates reasoning

Not all AI use in education is corrosive. Well‑designed tools can amplify higher-order thinking. The difference lies in design intent: are systems meant to produce answers or to scaffold reasoning? The next generation of edtech must prioritize the latter.

Practical design directions include:

- Scaffolded reasoning prompts that require students to show steps, justify choices, and critique outputs rather than accepting responses verbatim.

- Explainable AI features that reveal confidence levels, source attributions, and chains of thought so students can interrogate model reasoning.

- Socratic tutoring modes that ask probing questions instead of offering direct solutions, nudging learners toward metacognitive reflection.

- Adaptive assessment that measures process — the strategies used to reach an answer — not just final products.

When platforms make reasoning visible and assessable, they change incentives. Students must learn to reason because reasoning becomes part of the measurable outcome, not just an invisible habit the teacher hopes they acquire.
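
One way to picture this incentive shift is a grading rule that gives explicit weight to visible reasoning. The sketch below is purely illustrative: the `Submission` fields, the `process_score` formula, and the default weights are assumptions made up for this example, not a validated rubric or any vendor's method.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    """Hypothetical record of one student's work on an assignment."""
    product_score: float   # quality of the final deliverable, 0.0 to 1.0
    steps_shown: int       # reasoning steps the student documented
    steps_expected: int    # reasoning steps the rubric asks for
    critiques_of_ai: int   # AI outputs the student explicitly challenged or corrected


def process_score(sub: Submission, critique_target: int = 2) -> float:
    """Score the visible reasoning on a 0.0 to 1.0 scale (illustrative formula)."""
    if sub.steps_expected > 0:
        step_part = min(sub.steps_shown / sub.steps_expected, 1.0)
    else:
        step_part = 0.0
    critique_part = min(sub.critiques_of_ai / critique_target, 1.0)
    # Equal weight on showing steps and on critiquing model output (an assumption).
    return 0.5 * step_part + 0.5 * critique_part


def grade(sub: Submission, process_weight: float = 0.6) -> float:
    """Blend process and product; process_weight is an assumed policy choice."""
    if not 0.0 <= process_weight <= 1.0:
        raise ValueError("process_weight must be between 0 and 1")
    return process_weight * process_score(sub) + (1 - process_weight) * sub.product_score


# A polished essay with no visible reasoning versus a rougher one with full reasoning.
polished = Submission(product_score=0.95, steps_shown=0, steps_expected=5, critiques_of_ai=0)
reasoned = Submission(product_score=0.70, steps_shown=5, steps_expected=5, critiques_of_ai=2)

print(round(grade(polished), 2))  # 0.38
print(round(grade(reasoned), 2))  # 0.88
```

Under these assumed weights, the polished but unexamined submission scores well below the rougher one that documents its reasoning, which is the incentive change described above. Real rubrics would need far richer evidence of process than simple counts.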

Teacher training as linchpin

Teachers remain the most important factor in whether AI helps or harms learning. Professional development must shift from simple tool demonstrations to deep pedagogical guidance: how to design assignments that are AI‑resistant (or AI‑integrative in productive ways), how to teach prompt literacy, how to use AI as a conversational partner that models argumentative structure, and how to assess process vs. product. Investment in teacher capacity is non‑negotiable if AI is to be harnessed for cognitive growth.

Policy levers and institutional choices

Districts and universities face immediate choices that will shape long‑term outcomes. Blanket bans are a blunt instrument that often fails and pushes usage underground. Conversely, laissez‑faire adoption reproduces current risks. A calibrated approach includes:

- Clear academic integrity policies that distinguish between acceptable assistance and academic misconduct.

- Assessment redesign that emphasizes in‑class, oral, or project‑based evaluations, which are harder to outsource and better at assessing reasoning.

- Procurement standards for vendors that require transparency, explainability, and data protection safeguards.

Public funding can incentivize platforms that prioritize pedagogical goals over engagement metrics, and regulatory standards can define minimum explainability and privacy requirements for educational AI.

Three plausible trajectories

How this plays out depends on choices made now.

Path A — Convenience Consolidation: Schools prioritize short-term gains. AI accelerates assignment completion but critical thinking atrophies. Credential value declines; remedial training becomes common in higher education and the workforce.

Path B — Hybrid Reinvention: Districts and universities redesign curricula and assessments. AI is embedded as a Socratic tool; teachers receive robust training. Critical thinking is redefined to include AI literacy, and students learn to interrogate machine outputs alongside human sources.

Path C — Fragmented Experimentation: Uneven adoption generates patchwork outcomes. Wealthier institutions adopt best practices; others lag. Inequities deepen, and the national picture of education becomes more heterogeneous.

Technical innovations to watch

Several nascent technologies could shift the balance toward productive uses of AI. Models that expose chain‑of‑thought or provenance can make machine reasoning inspectable. Systems that generate adversarial prompts to probe how well students' reasoning holds up can turn AI into a tool for critical assessment. And hybrid human‑AI workflows in which teachers curate model outputs, rather than simply accepting them, can combine efficiency with oversight.

However, the industry must address fundamental limits: current generative models can hallucinate, reflect data biases, and lack true subject-matter judgment. Until these weaknesses are mitigated or explicitly surfaced to users, educators must assume outputs are provisional and build practices that require verification.

Where to from here?

AI in classrooms is neither inherently liberating nor inherently corrosive. It is a new kind of educational agent whose effects will be determined by how we design systems, align incentives, and teach people to use them. The central challenge for policymakers, educators, and edtech leaders is to nudge practices toward a future where AI amplifies human critical thinking rather than erodes it.

That will require reimagining assessment, investing in teacher expertise, demanding transparency from vendors, and designing tools that prize reasoning as a first-order outcome. If those choices are deferred, convenience will calcify into expectation, and generations could graduate with polished outputs but impoverished powers of judgment. If they are embraced, we have an opportunity to upgrade schooling for an AI‑augmented world: not by outsourcing thought, but by making reasoning visible, teachable, and central to what education promises.